You spent three weeks in Cursor. Maybe it was Bolt or Lovable — doesn’t matter. You have a working CBT journaling chatbot, and now therapy chatbot compliance is suddenly the question you can’t ignore.

Real users are messaging it about their anxiety, their insomnia, their worst days. It responds with warmth and CBT-style prompts. Then an advisor, an investor, or a Reddit commenter asked the question you’d been avoiding: is this thing HIPAA compliant? And you realized you had built the product before fully mapping the compliance obligations around it.

Here’s the reality. Mental health apps built by founders who genuinely care about access to care are among the most important products being built right now. The compliance obligations surrounding them are also among the most intricate in all of digital health — because a therapy chatbot sits at the intersection of health law, clinical ethics, crisis liability, AI regulation, and consumer protection simultaneously. That complexity isn’t a reason not to build. It’s a reason to understand exactly what you’ve built and what it obligates you to do.

This piece maps every compliance layer a therapy chatbot founder needs to understand. No legal jargon without a plain-English explanation. No vague “consult a lawyer” hand-waving without telling you what to ask the lawyer about. And a checklist at the end you can work through item by item.

| Quick Question: What are the compliance obligations for a therapy chatbot built with AI?

Quick Answer: A therapy chatbot built with AI coding tools like Cursor sits at the intersection of five regulatory frameworks: FDA software classification, HIPAA (if handling protected health information), FTC rules on health claims and data breaches, state mental health licensing law, and crisis safety liability. Your obligations depend on what your app does, what it claims, and who uses it; but every founder must at minimum address crisis escalation, accurate disclaimers, and data privacy before launch. |

Key Takeaways:

- Your chatbot’s FDA classification depends on what it does and what you claim — calling it a “wellness app” while marketing it as “AI therapy” puts you in the highest-risk regulatory position.

- HIPAA may or may not apply to your app, but the FTC Health Breach Notification Rule almost certainly does — “I’m not a covered entity” is not a safe harbor from federal health data obligations.

- Crisis safety is the most urgent compliance gap and the fastest to close — implement 988 integration and safe messaging guardrails before any significant user acquisition.

- First Question: Is Your Chatbot a Wellness App or a Medical Device?

- Does Your Therapy Chatbot Need to Be HIPAA Compliant?

- The Full Compliance Matrix: Every Layer a Therapy Chatbot Founder Must Know

- The Crisis Liability Problem — The One Nobody Talks About Until It’s Too Late

- The AI-Specific Compliance Layer: What Changes Because You Used an LLM

- The Therapy Chatbot Compliance Checklist

- What to Do If You’ve Already Launched

- Why Choose Topflight for Mental Health App Development

- Build Like You Care — Because You Do

First Question: Is Your Chatbot a Wellness App or a Medical Device?

The answer depends not on what you think your product is, but on what it does and what you claim it does.

FDA’s general wellness exemption covers apps that promote healthy behaviors — think meditation timers, mood journaling without diagnostic scoring, or breathing exercises — without making claims about treating or diagnosing a condition.

The moment your chatbot screens for depression, assesses suicide risk, delivers CBT-based therapeutic interventions, or describes itself as a therapy tool in marketing copy, you’re likely outside that exemption and inside the FDA’s Software as a Medical Device (SaMD) framework.

Here’s a three-question test. If the answer to any of these is yes, you need to seriously evaluate your mental health chatbot FDA regulation status:

- Does the app assess, diagnose, or screen for a specific mental health condition — depression, anxiety disorder, PTSD, or anything in the DSM-5?

- Does the app deliver therapeutic interventions (CBT exercises, exposure therapy prompts, behavioral activation protocols) positioned as treatment rather than general wellness content?

- Does the app’s marketing, UI copy, or App Store description characterize it as a therapy tool, a mental health treatment, or clinical support?

The answer determines whether you operate with minimal regulatory overhead or enter a 12–24 month premarket review process.

The three FDA tiers relevant to mental health apps break down like this.

General Wellness App (No FDA Oversight)

Your app promotes healthy behaviors without clinical claims.

Examples:

- mood journaling with no diagnostic scoring

- guided meditation prompts

- stress reduction exercises with no screening component

The key test is whether a reasonable person would interpret your product as treating a mental health condition. If the answer is no — and your marketing supports that answer — you’re likely in the general wellness category.

This is the lightest regulatory path, but it constrains what your product can do and say.

Clinical Decision Support (CDS) — May Be Exempt

If your chatbot is designed to support a licensed therapist’s clinical decision-making — not to serve patients directly as a standalone intervention — it may qualify for the CDS exemption under the 21st Century Cures Act.

The critical requirement: the software must display the basis for its recommendations, and the clinician must be able to independently review and override them.

A chatbot that gives therapists session prep summaries and suggests discussion topics might qualify. A chatbot that autonomously delivers psychotherapy to patients does not.

Software as a Medical Device (SaMD) — FDA Regulation Applies

Software intended to diagnose, treat, prevent, or mitigate a disease or condition. A cognitive behavioral therapy (CBT) chatbot marketed as a treatment for anxiety or depression, any tool that performs PHQ-9 screening or GAD-7 scoring, or anything described as a digital therapeutics product almost certainly falls here.

FDA requires a pre-submission meeting and likely a 510(k) or De Novo pathway. Budget 12–24 months minimum from first submission to clearance.

The most common founder mistake here is a mismatch. You call the product a “wellness app” in your privacy policy and terms of service while marketing it as “your AI therapist” or “AI-powered anxiety treatment” on the App Store.

FDA and FTC both evaluate the totality of your claims — the marketing, the UI copy, the onboarding screens, and the product’s actual behavior. A disclaimer doesn’t override a homepage headline.

If you’re building conversational AI in healthcare, the classification question isn’t optional — it’s foundational.

Does Your Therapy Chatbot Need to Be HIPAA Compliant?

Maybe — and the “maybe” is more dangerous than a clear yes or no. This is where mental health app HIPAA compliance gets genuinely confusing, because the answer depends on your business model, not your product features.

HIPAA applies when you are a covered entity (a healthcare provider, health plan, or clearinghouse) or a business associate of one.

- If your chatbot collects information about a user’s mental health — their diagnoses, symptoms, medications, treatment history — and you have relationships with covered entities, HIPAA applies.

- If you’re a direct-to-consumer app with no provider relationships, HIPAA may not apply to you directly. But that doesn’t mean you’re in the clear. The FTC Health Breach Notification Rule almost certainly applies, and it covers mental health data even when HIPAA doesn’t.

Here are the three scenarios founders actually find themselves in.

Scenario A — Pure DTC Wellness App (No Provider Relationship)

HIPAA does not apply directly. But the regulatory exposure is still real. The FTC Health Breach Notification Rule requires you to notify users and the FTC if you experience a breach involving health data — and the definition of “health data” is broad.

FTC Act Section 5 covers unfair or deceptive trade practices, which means your privacy policy better match your actual data practices. State privacy laws — California’s CPRA, Washington’s My Health My Data Act, and others — layer additional obligations depending on where your users live.

You still need encryption, a legitimate privacy policy, secure data handling, and real terms of service.

Scenario B — App Used by or Alongside Licensed Therapists

This is the scenario most founders underestimate. If a licensed therapist, a licensed clinical social worker (LCSW), or any other licensed mental health provider uses your app with their patients, or if you facilitate clinical relationships through your platform, you are likely a business associate under HIPAA.

That means you need a business associate agreement (BAA) executed with every therapist or practice using your platform. It means protected health information (PHI) protections:

- encryption at rest and in transit

- audit logs

- access controls

- a breach notification plan

This compliance layer is non-trivial.

Scenario C — You Are the Provider (Telemental Health Platform)

If your platform employs or contracts licensed therapists and facilitates treatment directly, you are a covered entity. Full HIPAA compliance is required — not just the technical safeguards, but administrative and physical safeguards, workforce training, a designated privacy officer, and ongoing risk assessments. This is the highest-obligation tier and it requires dedicated compliance infrastructure, not a weekend project.

Don’t assume “I’m not a covered entity” is a safe harbor. The FTC Health Breach Notification Rule covers health information collected by non-HIPAA entities. Many founders discover this only after a breach — when it’s too late to retroactively comply.

For a deeper dive on the technical implementation side, see our guide on HIPAA compliant app development.

Not sure which scenario your product falls into? Topflight can map your architecture to the right compliance obligation tier. Talk to our team.

The Full Compliance Matrix: Every Layer a Therapy Chatbot Founder Must Know

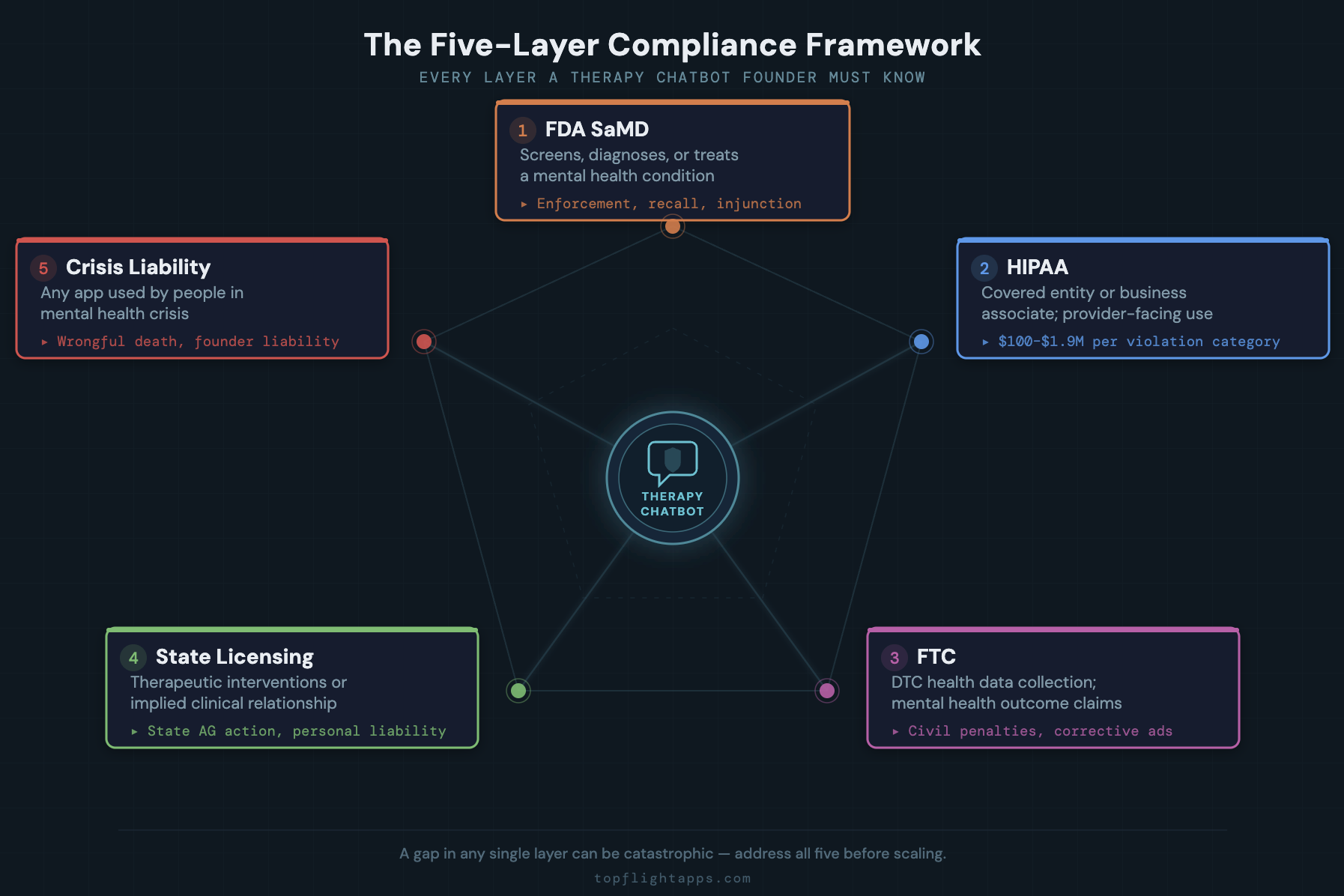

A therapy chatbot doesn’t face one compliance framework — it faces five simultaneously. Understanding the AI therapy app legal requirements means mapping each layer, knowing what triggers it, and knowing what happens if you ignore it. This matrix is the reference table.

| Layer | Triggered When | Key Obligation | Worst-Case If Ignored |

| HIPAA | Covered entity or business associate relationship; provider-facing use | Encryption, audit logs, BAAs, breach notification, annual risk assessment | OCR investigation, $100–$1.9M per violation category |

| FDA SaMD | App screens, diagnoses, or treats a mental health condition | Pre-market submission (FDA 510(k) or De Novo), quality management system, post-market surveillance | Enforcement action, mandatory recall, injunction against marketing |

| FTC | Any DTC app collecting health data; any claims about mental health outcomes | Substantiated efficacy claims; health breach notification; no deceptive privacy practices | FTC enforcement action, civil penalties, mandatory corrective advertising |

| State Licensing Law | App provides therapeutic interventions or implies a clinical relationship | Avoid unauthorized practice of psychology or medicine; clear disclaimers; no diagnosis language | State AG action for unauthorized practice; personal founder liability |

| Crisis Liability | Any app used by people in mental health crisis — which is nearly all of them | Safe messaging guidelines; 988 integration; escalation pathways; no harm facilitation | Wrongful death lawsuit; reputational destruction; personal founder liability |

Five layers. Each with its own enforcement body, its own penalties, and its own triggering conditions. The challenge for founders isn’t that any single layer is impossible — it’s that you need to address all five, and a gap in any one can be catastrophic.

If you’re navigating AI in healthcare compliance more broadly, the matrix above applies to most clinical AI products, but the crisis liability layer is uniquely acute for mental health.

The Crisis Liability Problem — The One Nobody Talks About Until It’s Too Late

A therapy chatbot will encounter users in crisis. This is not a hypothetical. A depression app attracts people who are depressed. An anxiety app is used by people at their worst moments. A behavioral health app with any meaningful user base will receive messages expressing suicidal ideation, self-harm, or acute psychological distress — whether or not the product was designed for those conversations.

This creates a legal and ethical obligation that no amount of disclaimers can fully discharge. Here is what that obligation actually looks like.

Safe Messaging Guidelines

The Suicide Prevention Resource Center (SPRC) and the American Foundation for Suicide Prevention (AFSP) publish safe messaging guidelines for communicating about suicide.

Your AI chatbot’s responses to crisis content must align with these guidelines — no detailed discussion of methods or means, no romanticization of self-harm, no minimization of distress.

These aren’t technically a statutory requirement, but deviation from established safe messaging guidelines is routinely used as evidence of negligence in civil litigation. A plaintiff’s attorney will put your chatbot’s crisis response next to the SPRC guidelines and ask a jury why they differ.

Mandatory Crisis Escalation

Your chatbot must have a documented, tested crisis intervention pathway for users who express suicidal ideation or acute crisis. At minimum: surface the 988 Suicide and Crisis Lifeline immediately, clearly, and prominently whenever crisis language is detected.

Ideally: provide a live handoff mechanism to a licensed clinician if your platform supports provider relationships. The chatbot should never be the final point of contact for a crisis disclosure. Ever.

What Your LLM Cannot Do

The underlying model — whether GPT-4/5, Claude, Llama, or any other large language model (LLM) — is not a crisis counselor. It is not trained as one. It can generate plausible, empathetic-sounding crisis responses that are clinically inappropriate, factually wrong, or actively harmful.

Hallucination in a crisis context isn’t an edge case to manage — it’s a patient safety risk. Your compliance obligation includes implementing AI safety guardrails specifically for crisis scenarios, testing those guardrails systematically across representative crisis disclosures, and documenting that testing.

Woebot, Wysa, and other established mental health chatbots employ entire clinical teams dedicated to reviewing and refining crisis response protocols. If you built your product in three weeks with an AI coding tool, your crisis protocol needs dedicated attention before launch — not after your first incident.

Terms of Service Limitations

Your terms of service cannot disclaim your way out of crisis liability. Courts have consistently held that exculpatory clauses do not shield against gross negligence. If your chatbot responds to a user disclosing suicidal ideation in a way a reasonable person would consider harmful, and harm results, the words “this is not a medical device” in your ToS will not protect you. Duty of care exists independent of contractual language.

The broader conversation around gen AI in healthcare often focuses on efficiency and access. In mental health specifically, the crisis liability dimension deserves equal weight.

The AI-Specific Compliance Layer: What Changes Because You Used an LLM

Every compliance obligation above applies to any AI mental health tool — whether hand-coded or built with Cursor. Building on a large language model adds a distinct layer of risks that traditional health app compliance frameworks weren’t designed to address.

If you’ve got a therapy chatbot built with Cursor, Bolt, or any AI coding tool and you’re using an LLM as the conversational backend, these are the additional obligations.

Hallucination in a Clinical Context

LLMs generate plausible-sounding text. That’s what they do. In a general consumer product, a hallucinated fact is an annoyance. In a therapy context — when a user asks whether their medication has interactions, whether their symptoms are consistent with a diagnosis, or whether intrusive thoughts are “normal” — a confidently stated, completely incorrect answer can cause real clinical harm.

Your compliance obligation includes building a systematic evaluation protocol for your chatbot’s responses across a representative range of clinical scenarios, not just manual spot-checking during development.

Prompt Injection and Data Exfiltration

A sophisticated user — or a malicious one — can use prompt engineering techniques to cause your chatbot to reveal its system prompt, behave outside its intended guardrails, or in a poorly architected system, access other users’ conversation data.

In a therapy context where conversations contain deeply sensitive mental health disclosures, a prompt injection vulnerability isn’t just a product bug. It’s a potential HIPAA breach vector and a massive liability exposure.

Your LLM Provider’s BAA Status

If your chatbot processes protected health information and you’re routing that data through OpenAI, Anthropic, Google, or any other LLM API provider, you need a BAA with that provider.

- OpenAI offers a BAA for healthcare use cases through its API.

- Anthropic has a BAA pathway for Claude API enterprise customers.

- Google Cloud offers BAAs for its Vertex AI services.

Confirm BAA status before you process any real user health data — not retroactively after you’ve been sending PHI to a non-covered endpoint for six months.

For more on the specifics of LLM providers and health data, see our analysis on ChatGPT HIPAA compliance.

Model Versioning and Clinical Consistency

LLM providers update their models — sometimes with notice, sometimes without. A behavior your chatbot relied on in GPT-4-turbo might change subtly after a model version update.

For a general consumer app, that’s a product issue you catch in QA. For a clinical tool, an undocumented behavioral change that alters how your chatbot responds to a crisis disclosure is a patient safety event.

Your compliance framework must include model version pinning for production environments and a re-evaluation protocol triggered by any model update.

The Therapy Chatbot Compliance Checklist

This is the practical output. Work through every item against your current product. Items marked [CRITICAL] represent the highest-risk gaps — address these before any significant user acquisition or marketing spend.

FDA Classification

- [ ] Documented decision on whether your app is a wellness app, CDS, or SaMD — with written rationale for the classification

- [ ] Marketing copy, UI language, and App Store description reviewed for unintended clinical claims (look for “therapy,” “treatment,” “clinically proven,” “diagnose”)

- [ ] If SaMD: pre-submission meeting with FDA scheduled or completed [CRITICAL]

- [ ] If claiming CDS exemption: clinician review pathway is documented, functional, and independently reviewable

HIPAA (If Applicable)

- [ ] Written determination of whether you are a covered entity, business associate, or neither — with documented rationale

- [ ] BAAs executed with all PHI-handling vendors: cloud provider, LLM API provider, analytics tools, messaging services [CRITICAL]

- [ ] Encryption at rest and in transit confirmed for all PHI storage and transmission

- [ ] Audit logging for all PHI access implemented and tested

- [ ] Breach notification plan documented — includes 60-day OCR notification requirement

- [ ] Annual risk assessment conducted and documented

- [ ] Data retention policy defined — how long PHI is stored, when it’s purged, and how deletion is verified

HIPAA compliance mental health app requirements are extensive — if you need technical guidance, our walkthrough on HIPAA compliant software development covers the architecture requirements in detail.

FTC Compliance

- [ ] All efficacy claims in marketing are substantiated — nothing says “clinically proven” without actual clinical evidence

- [ ] Privacy policy accurately reflects your real data collection and sharing practices (not a template you copied)

- [ ] FTC Health Breach Notification Rule compliance confirmed if you collect health data outside HIPAA coverage

- [ ] Subscription terms and cancellation process disclosed clearly — this is an active FTC enforcement priority for DTC health apps

- [ ] User consent mechanisms for health data collection are explicit and documented — not a pre-checked box or a buried ToS clause

State Licensing

- [ ] Legal review confirms your product does not constitute unauthorized practice of psychology or medicine in your operating states

- [ ] Disclaimers present and prominent: “not a substitute for professional mental health care,” “not a medical device”

- [ ] If facilitating licensed therapist relationships: therapist licensing confirmed in each state of operation

- [ ] Informed consent workflow documented for any clinical or quasi-clinical feature

Crisis Safety (CRITICAL — Complete Before Launch)

- [ ] Crisis detection logic implemented and tested across representative crisis disclosure scenarios [CRITICAL]

- [ ] 988 Suicide and Crisis Lifeline surfaced in-app whenever crisis language is detected [CRITICAL]

- [ ] Safe messaging guidelines reviewed and reflected in chatbot response parameters

- [ ] No chatbot responses discuss method, means, or detailed content around self-harm

- [ ] Crisis escalation pathway to a licensed clinician exists if your platform has provider relationships

- [ ] Crisis response logic re-tested after every model update or system prompt change

AI-Specific Safeguards

- [ ] LLM provider BAA in place if processing PHI

- [ ] Model version pinned — not using an auto-updating endpoint for production clinical conversations

- [ ] Systematic evaluation of chatbot responses across a clinical scenario library (not just spot-checking)

- [ ] Prompt injection testing completed against representative attack vectors

- [ ] System prompt reviewed by a licensed mental health professional before launch

Topflight builds HIPAA-compliant mental health and behavioral health applications. If you need a compliance architecture review, a technical build, or help closing gaps in an existing product, talk to our team.

What to Do If You’ve Already Launched

If your therapy chatbot is live with real users and you’re reading this after the fact, don’t panic — but don’t delay either. Here is the triage order, ranked by risk and urgency.

First: Crisis Safety

Close any gap in crisis detection and escalation before anything else. This is your highest-liability exposure and the fastest to address. Update your system prompt to handle crisis disclosures safely, integrate the 988 Lifeline as your primary crisis hotline, and test against at least a dozen representative crisis scenarios. Do this today.

Second: Determine Your FDA Classification

If your marketing makes clinical claims and your app has meaningful user volume, get a regulatory and legal opinion before you scale further. The cost of AI in healthcare compliance goes up exponentially if you need to reclassify and resubmit after reaching significant scale.

Third: Execute Your BAAs

Contact your LLM API provider, cloud provider, and any analytics vendor that touches user data. Most have standard BAA processes — OpenAI, Anthropic, AWS, and GCP all offer them. Start this process this week.

Fourth: Audit Your Marketing

Review your App Store description, landing page copy, and any paid ads for unintended clinical claims. Pull or rewrite anything that says “therapy,” “treatment,” or “clinically proven” unless you can substantiate it with evidence.

Fifth: Document Everything

Write down the decisions you’ve made, the rationale behind them, and the date you made them. If OCR or the FTC ever investigate, they reward demonstrated good-faith process and intent — not perfection. A founder who can show a documented compliance decision trail is in a fundamentally better position than one who can’t.

Why Choose Topflight for Mental Health App Development

Topflight builds HIPAA-compliant behavioral health applications — including AI-powered chatbots, telemental health platforms, and CBT-based digital therapeutics tools. We work at the intersection of technical architecture and regulatory compliance that’s specific to mental health, including understanding how will AI help change EHR integration for behavioral health workflows.

What we bring to mental health app founders:

- FDA classification guidance — helping you determine whether you’re a wellness app, CDS, or SaMD, and building accordingly

- HIPAA-compliant architecture — encryption, audit logging, BAA management, and breach response planning built into the infrastructure

- Crisis safety protocol design — LLM guardrail implementation, safe messaging compliance, and 988 integration

- Clinical workflow integration — licensed provider relationships, EHR connectivity, and informed consent workflows

- Compliance gap analysis — for AI-built apps that shipped fast and need a professional audit before scaling

Built something in Cursor that you’re not sure is compliant? Get a free architecture review from a team that has shipped mental health apps in regulated environments.

Build Like You Care — Because You Do

A therapy chatbot is one of the most valuable things a founder can build right now — and one of the most legally complex. The compliance surface spans five distinct regulatory frameworks, each with real enforcement mechanisms and real consequences. If you’re asking, “Is my therapy app HIPAA compliant?”, you’re already asking the right question — but HIPAA is only one of five layers you need to address.

None of that should stop you from building. It should make you build deliberately.

The checklist above is the map. Work through it systematically. Close the crisis safety gaps first. Get legal eyes on your FDA classification before you invest in growth. And if the compliance surface feels overwhelming, remember: you don’t have to figure it out alone. There are teams — including ours — that do this every day.

Frequently Asked Questions

Does a therapy chatbot need to be HIPAA compliant?

It depends on your business model, not your product features. If you have relationships with covered entities — healthcare providers, health plans, or clearinghouses — or if therapists use your product with patients, HIPAA likely applies. If you’re purely direct-to-consumer with no provider relationships, HIPAA may not apply, but the FTC Health Breach Notification Rule almost certainly does.

Is a CBT chatbot considered a medical device by the FDA?

If it delivers CBT interventions positioned as treatment for a diagnosable condition like anxiety or depression, it likely qualifies as Software as a Medical Device (SaMD). A chatbot that offers general wellness content — mindfulness exercises, mood journaling without clinical scoring — may fall under the general wellness exemption. The classification depends on both functionality and marketing claims.

What happens if someone uses my app in a crisis?

You have a legal and ethical obligation to provide a safe response. At minimum, your app must surface the 988 Suicide and Crisis Lifeline when crisis language is detected. Your chatbot should never be the last point of contact for a crisis disclosure, and its responses must align with safe messaging guidelines. Failure to implement crisis safeguards creates significant negligence liability.

Can I use GPT-4 or Claude in a HIPAA-compliant therapy app?

Yes, but only with a BAA in place. OpenAI offers BAAs for its API healthcare customers. Anthropic provides a BAA pathway for Claude API enterprise users. You must confirm BAA status before routing any protected health information through the API. Using a consumer-tier plan without a BAA while processing PHI is a HIPAA violation.

What disclaimers does a mental health app need?

Do I need a BAA with OpenAI or Anthropic for my health app?

If your app transmits protected health information to the LLM API — meaning user messages contain diagnoses, symptoms, medication information, or other PHI — yes, you need a BAA. Both providers offer BAA pathways for qualifying customers. If you’re using the API for non-PHI interactions only and have architecture that ensures PHI never reaches the LLM endpoint, a BAA may not be required, but document that architectural decision.

What is the general wellness exemption and does my therapy app qualify?

The FDA’s general wellness exemption applies to software that promotes healthy lifestyle behaviors without making claims about diagnosing, treating, or preventing a specific disease or condition. Meditation apps, mood journaling without diagnostic scoring, and stress reduction tools generally qualify. The moment your app claims to treat anxiety, screen for depression, or deliver therapeutic interventions, you’re likely outside the exemption.

Can my terms of service protect me from liability if a user is harmed?

Not fully. Courts have held that exculpatory clauses in terms of service do not protect against claims of gross negligence. If your chatbot responds to a crisis disclosure in a way a reasonable person would consider harmful, and harm occurs, your ToS will not shield you. Terms of service are one component of your legal posture — not a liability firewall.