In March 2026, Microsoft retired PowerScribe 360, the reporting platform 80% of US radiologists rely on. Migration to PowerScribe One is forced and widely resented. If you’re building AI medical image documentation, the disruption window is open right now.

It’s also a generational shift. Voice dictation (the PowerScribe 360 era) had radiologists narrate every finding. Ambient AI dictation (Dragon Copilot, PowerScribe Smart Impression) transcribed clinician speech. The third generation generates a structured draft from the image itself. The radiologist becomes a reviewer, not an author.

The infrastructure for this (DICOM ingestion, AI inference, structured report generation, FHIR write-back) is production-ready in 2026. A chest X-ray AI demo is a weekend project. A production system that radiologists use daily, that passes FDA review, writes signed reports into the EHR, and handles DICOM PHI correctly is a fundamentally different engineering problem. This guide covers that gap for teams building against radiology, pathology, ophthalmology, and the Sectra/PowerScribe incumbents.

How do you build an AI-assisted medical image documentation system?

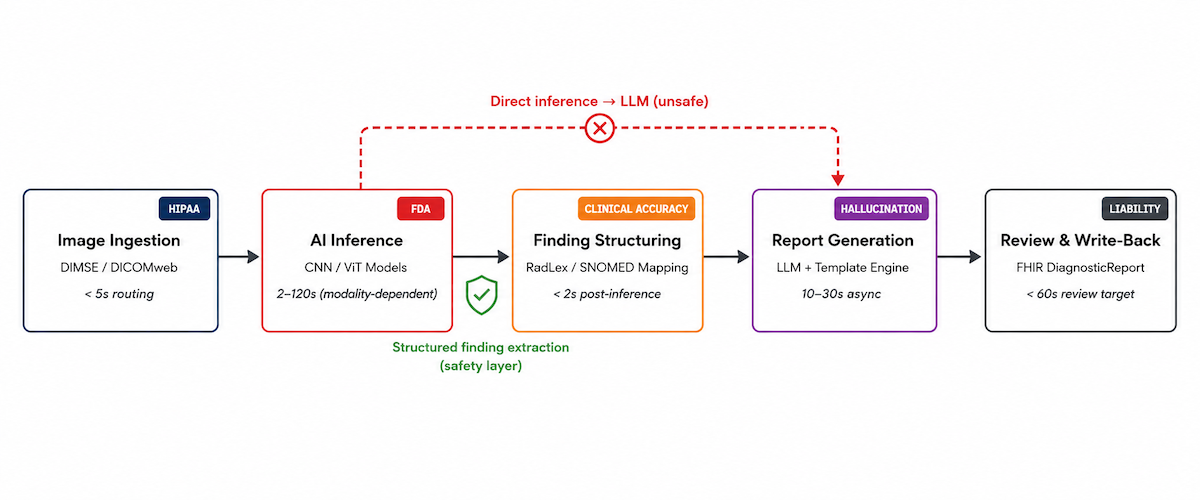

Build it as a five-stage pipeline: DICOM ingestion, AI inference, structured finding extraction, LLM-driven report generation, and radiologist review with FHIR DiagnosticReport write-back. The structured finding extraction step between inference and report generation is the safety architecture that prevents hallucinated clinical entities. The radiologist review UI and specialty-specific report templates are where products differentiate; the DICOM pipeline and compliance infrastructure are the entry ticket.

Key Takeaways:

- A chest X-ray AI demo is a weekend project. A production system is a fundamentally different engineering problem. The gap is DICOM pipeline architecture, radiologist review UX, FDA classification, and EHR integration. Every design decision in a production product supports the radiologist review workflow, not the AI model showcase.

- The AI generates the draft. The radiologist signs the report. Two-step inference → structuring → report generation is the safety layer. Finding-to-image linking in the review UI is the trust layer. Specialty-specific templates are the differentiation layer. These three are where products win.

- DICOM pipeline and HIPAA/FDA compliance are the entry ticket, not the product. Get those right first. Differentiated products are built on the review UX and specialty depth, not by claiming better AI accuracy.

Table of Contents

- What AI-Assisted Medical Image Documentation Is

- The Five-Stage Technical Pipeline: Where the Hard Problems Live

- DICOM Integration and Image Ingestion: The Foundation Layer

- AI Inference Is Where the FDA Risk Lives

- Specialty-Specific Report Formats: Where Generalist Products Fail

- EHR and PACS Integration: Writing Reports Where Clinicians Work

- HIPAA Compliance for Medical Imaging AI: DICOM PHI Is Different

- FDA Classification for AI Imaging Documentation: A Tiered Reality

- Build-vs-Buy Decision Matrix: What to Build, What to Use as an API

- The AI Imaging Documentation Build Checklist

- Why Choose Topflight for AI Medical Image Documentation Development

What AI-Assisted Medical Image Documentation Is

AI-assisted medical image documentation generates a structured clinical report from a medical image study and routes it for specialist review and EHR write-back.

Three generations led here. Voice dictation (PowerScribe 360, Dragon Medical): the radiologist authored everything. Ambient AI dictation (PowerScribe Smart Impression, Dragon Copilot, and other conversational AI in healthcare): still speech-driven. AI-assisted radiology reporting: the draft is generated from the image itself. Sectra RIS, PowerScribe One, and startups are racing to own this category.

The Five Functions of an AI Imaging Documentation System

To build an AI-powered radiology platform in this generation, five functions define the system:

- Image ingestion: receive the study from PACS via DICOM/DICOMweb, parse metadata, route by modality.

- AI inference: detect findings, segment structures, classify pathology, assign confidence scores.

- Finding structuring: map inference outputs to a structured schema (anatomy, finding type, severity, measurement).

- Report generation: LLM or template-driven draft in the specialty’s format (findings + impression, synoptic report, grading scale).

- Review, approval, EHR write-back: radiologist reviews, edits inline, approves. Report writes to EHR (FHIR DiagnosticReport) and PACS (DICOM SR).

The Five-Stage Technical Pipeline: Where the Hard Problems Live

Each stage of an automated medical imaging documentation pipeline has a different dominant hard problem. Not a different feature challenge. A different category of risk: HIPAA at ingestion, FDA at the AI inference pipeline, clinical accuracy at structuring, hallucination at generation, legal liability at review. The table maps all five.

| Stage | Input | Processing | Output | Max Latency | Primary Risk |
|---|---|---|---|---|---|
| 1. Image Ingestion | DICOM study from PACS/VNA | DICOM parsing, metadata extraction, modality routing, pixel normalization | Preprocessed image tensor + structured metadata | < 5s for routing; modality-dependent for preprocessing | HIPAA – DICOM files contain PHI in header tags; de-identification required for any non-clinical use |
| 2. AI Inference | Preprocessed image tensor | CNN/ViT model(s); detection, segmentation, classification; confidence scoring | Bounding boxes, segmentation masks, finding labels, confidence scores | < 2s for X-ray; 30–120s for CT/MRI | FDA – inference output that drives clinical action is the SaMD risk event; confidence thresholds determine classification tier |
| 3. Finding Structuring | Raw inference output | Anatomy ontology mapping; SNOMED/RadLex term lookup; measurement calculation | Structured finding JSON: {anatomy, finding, severity, measurement, confidence, source_slice} | < 2s post-inference | Clinical – structuring errors compound through report generation; a mis-mapped anatomy term produces a factually wrong report |
| 4. Report Generation | Structured finding JSON + prior reports + clinical context | LLM or template engine; specialty-specific report format; impression generation | Draft structured report: findings + impression + structured data fields | 10–30s (async acceptable) | Hallucination – LLM can introduce clinical findings not present in AI inference output |
| 5. Review & Write-Back | Draft report + original images | Radiologist review UI; inline editing; approval workflow; FHIR DiagnosticReport generation | Finalized signed report in EHR; DICOM SR optionally written back to PACS | < 60s review target (product goal) | Liability – signed report is a legal clinical document; review UX determines whether the physician meaningfully reviews or rubber-stamps |

Two-Step Inference Is the Safety Architecture

The most dangerous architectural shortcut in AI imaging documentation: piping AI inference output directly into an LLM for report generation without a structured finding extraction step in between.

The result reads well. The problem is clinical: measurements, anatomical locations, and severity descriptors are inferred by the LLM from context, not measured from the image. Hallucination in clinical AI is the default failure mode when the architecture skips structured finding extraction.

The fix is the two-step architecture: inference → structured finding JSON → LLM-generated report from the structured JSON. The structured finding step constrains what the LLM can say. Every clinical entity in the report traces to a specific inference output with a confidence score and a source image slice. That traceability is the safety layer, not an optional refinement.
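One way to make that traceability testable is a post-generation check that rejects any draft containing a measurement no structured finding supports. The sketch below is illustrative only: the `Finding` fields mirror the structured finding JSON described in the pipeline table, while the class name, the `unsupported_measurements` helper, and the millimetre-only regex are assumptions rather than part of any standard.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Finding:
    """One structured finding produced by the structuring stage (hypothetical schema)."""
    anatomy: str
    finding: str
    severity: str
    measurement_mm: Optional[float]
    confidence: float
    source_slice: int


def unsupported_measurements(report_text: str, findings: List[Finding]) -> List[float]:
    """Return every 'N mm' value in the draft that no structured finding contains."""
    allowed = {f.measurement_mm for f in findings if f.measurement_mm is not None}
    mentioned = [float(m) for m in re.findall(r"(\d+(?:\.\d+)?)\s*mm", report_text)]
    return [value for value in mentioned if value not in allowed]


findings = [Finding("right upper lobe", "nodule", "indeterminate", 6.0, 0.91, 42)]
draft = "A 6 mm nodule in the right upper lobe. A 12 mm hilar lymph node."
print(unsupported_measurements(draft, findings))  # the 12 mm value has no source finding
```

A real guard would also need to cover anatomy terms and severity descriptors, not only measurements; this shows just the shape of the check.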

DICOM Integration and Image Ingestion: The Foundation Layer

Every AI imaging documentation product lives or dies on its DICOM integration. Clinical imaging data lives in PACS, VNAs (Vendor Neutral Archives), and RIS/PACS combinations communicating via DICOM network protocols.

DICOM Ingestion Architecture

Two protocol families, and you probably need both:

- DIMSE (C-STORE, C-FIND, C-MOVE): the traditional DICOM protocol suite for image transfer and query. Required for legacy PACS (most enterprise installations); your platform needs a DICOM-compliant Storage SCP. Non-negotiable for enterprise deployment.

- DICOMweb (WADO-RS, STOW-RS, QIDO-RS): the RESTful DICOM standard. Preferred for cloud-native architectures and newer PACS/VNA systems. Dramatically simpler than DIMSE.

DICOM metadata extraction requires parsing at minimum: PatientID (0010,0020), StudyInstanceUID (0020,000D), SeriesInstanceUID (0020,000E), Modality (0008,0060), BodyPartExamined (0018,0015), StudyDescription (0008,1030), and PixelData (7FE0,0010). The DICOM worklist integration determines how studies route into your pipeline, and any DICOM tag can carry PHI.
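As a rough illustration of the metadata step, the sketch below pulls the routing attributes out of a dataset in the DICOM JSON Model, the format DICOMweb services return for metadata queries, where each attribute is keyed by its eight-character hex tag and carries a `Value` list. The names `ROUTING_TAGS`, `first_value`, and `extract_routing_metadata` are hypothetical, and the sample dataset is made up.

```python
from typing import Any, Dict, Optional

# Tag numbers from the paragraph above, written as DICOM JSON Model keys.
ROUTING_TAGS = {
    "PatientID": "00100020",
    "StudyInstanceUID": "0020000D",
    "SeriesInstanceUID": "0020000E",
    "Modality": "00080060",
    "BodyPartExamined": "00180015",
    "StudyDescription": "00081030",
}


def first_value(dataset: Dict[str, Any], tag: str) -> Optional[Any]:
    """Return the first entry of an attribute's Value list, or None if absent or empty."""
    values = dataset.get(tag, {}).get("Value") or []
    return values[0] if values else None


def extract_routing_metadata(dataset: Dict[str, Any]) -> Dict[str, Optional[Any]]:
    """Collect the attributes needed for routing; missing ones come back as None."""
    return {name: first_value(dataset, tag) for name, tag in ROUTING_TAGS.items()}


sample = {
    "00100020": {"vr": "LO", "Value": ["MRN-0001"]},
    "0020000D": {"vr": "UI", "Value": ["1.2.3.4"]},
    "00080060": {"vr": "CS", "Value": ["DX"]},
    "00180015": {"vr": "CS", "Value": ["CHEST"]},
}
print(extract_routing_metadata(sample))
```

Returning `None` for absent attributes, rather than raising, lets the router decide what to do with incomplete studies.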

Modality routing is not optional. A chest X-ray and brain MRI need entirely different AI models, preprocessing pipelines, and report templates. Route by modality code (CT, MR, CR, DX, SM for whole-slide pathology, OP for ophthalmic photography) and body part. This is medical device integration software at the protocol level.
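A minimal routing sketch, assuming a lookup keyed on the DICOM Modality value and BodyPartExamined. Note that the DICOM Modality value for magnetic resonance is `MR`. The pipeline names, the `ROUTES` table, and the manual-triage fallback are all hypothetical.

```python
from typing import Dict, Optional, Tuple

# (modality, body part) -> pipeline name. A None body part is a modality-wide default.
ROUTES: Dict[Tuple[str, Optional[str]], str] = {
    ("DX", "CHEST"): "chest_xray_pipeline",
    ("CR", "CHEST"): "chest_xray_pipeline",
    ("CT", "CHEST"): "chest_ct_pipeline",
    ("MR", "BRAIN"): "brain_mri_pipeline",
    ("SM", None): "pathology_wsi_pipeline",
    ("OP", None): "fundus_pipeline",
}

FALLBACK = "manual_triage_queue"


def route_study(modality: Optional[str], body_part: Optional[str]) -> str:
    """Pick a pipeline for a study, falling back to manual triage when nothing matches."""
    mod = (modality or "").strip().upper()
    part = (body_part or "").strip().upper() or None
    return ROUTES.get((mod, part)) or ROUTES.get((mod, None)) or FALLBACK


print(route_study("dx", "chest"))      # exact match
print(route_study("SM", ""))           # modality-wide default
print(route_study("US", "ABDOMEN"))    # no route configured
```

Sending unmatched studies to a human queue, rather than to a default model, keeps an unsupported modality from silently reaching the wrong pipeline.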

DICOM de-identification per PS3.15 Annex E: 18 HIPAA Safe Harbor identifiers live across hundreds of DICOM tags. Failure to de-identify before sending to a non-BAA cloud service is a HIPAA violation. The part most builders miss: DICOM pixel data can contain burned-in PHI (patient name, DOB, MRN overlaid on image pixels, not just in the header). Common in older ultrasound, fluoroscopy, and nuclear medicine. In a 415,000-image validation study, 1.5% contained burned-in text; only 10 false negatives with OCR-based detection (Springer 10.1007/s10278-024-01098-7). Header de-identification alone is insufficient.

Pixel Preprocessing by Modality

AI model input requirements vary by modality:

- X-ray (CR/DX): DICOM pixel data to 16-bit grayscale PNG; windowing/leveling to standard chest or bone windows; resize to model input (typically 512×512 or 1024×1024).

- CT: volumetric 3D tensor from axial DICOM series stack. HU windowing by anatomy (lung window: WL −600 HU, WW 1500; soft tissue: WL 40, WW 400). Slice thickness normalization.

- MRI: multi-sequence input (T1, T2, FLAIR, DWI as separate channels). Sequence registration for multi-sequence models. Intensity normalization required (MRI pixel values are not standardized across vendors, unlike CT HU).

- Whole-slide pathology (SM): multi-resolution pyramid (SVS, NDPI, MRXS formats). Tile extraction at appropriate magnification (20× for diagnostic, 40× for cytology). Stain normalization for H&E and IHC variations between labs (model accuracy degrades without it).
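The CT windowing step above reduces to a clip-and-rescale around the window level. The sketch below uses a simplified linear window (not the exact VOI LUT formula in the DICOM standard) and the lung and soft-tissue settings quoted in the list; the function name and the 0 to 1 output range are assumptions.

```python
def window_hu(hu: float, level: float, width: float) -> float:
    """Clip a Hounsfield value to [level - width/2, level + width/2] and scale to 0..1."""
    lower = level - width / 2.0
    upper = level + width / 2.0
    clipped = min(max(hu, lower), upper)
    return (clipped - lower) / (upper - lower)


# (window level, window width) pairs from the list above.
LUNG_WINDOW = (-600.0, 1500.0)        # covers -1350 HU to 150 HU
SOFT_TISSUE_WINDOW = (40.0, 400.0)    # covers -160 HU to 240 HU

print(window_hu(-600.0, *LUNG_WINDOW))         # the window level maps to mid-gray
print(window_hu(1000.0, *SOFT_TISSUE_WINDOW))  # dense bone saturates
```

In a production pipeline the same arithmetic would run vectorized over the whole volume; the scalar form is only meant to make the formula explicit.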

AI Inference Is Where the FDA Risk Lives

Most AI imaging products focus engineering energy on inference. Architecture choices here compound downstream into FDA classification tier, confidence threshold design, and report generation safety. AI radiology report generation quality starts at model selection, but the regulatory consequences propagate far past it.

Model Architecture Patterns by Task

Four patterns, each with a different output type and downstream implication:

- Detection models (finding localization): YOLO, RetinaNet, or Faster R-CNN variants. Output: bounding box + class label + confidence score per finding. Use for focal findings (nodule, fracture, mass, hemorrhage). Convolutional neural network (CNN) architectures dominate for single-frame modalities.

- Segmentation models (structure delineation): U-Net and variants (nnU-Net, Swin-UNet). Output: pixel-level mask. Required for medical image segmentation tasks like volumetric measurement (tumor size, organ volume).

- Classification models (study-level or finding-level labels): EfficientNet, ResNet, vision transformer (ViT) variants. Whole-study classification (normal vs. abnormal) or finding-level labels. Simpler to build than detection, less structured output for report generation. Anomaly detection at the study level falls here.

- Foundation models for medical imaging: MedSAM (segmentation), BioViL-T (chest X-ray), MedGemma (Google, May 2025; 4B and 27B variants, open weights on Hugging Face), MedSigLIP (400M-param image encoder powering MedGemma; DeepHealth uses it for chest X-ray triaging). MedLM (Google Cloud, formerly Med-PaLM) for text. Earlier multimodal AI models like GPT-4V demonstrated the feasibility, but production deployments now target the GPT-5 family or self-hosted alternatives. Honest tradeoff: Google explicitly positions MedGemma as a research tool requiring fine-tuning and clinical validation. Foundation models are not plug-and-play for gen AI in healthcare production.

Confidence Thresholds Are a Regulatory Decision Before a Clinical One

Confidence scores are the safety interface between model and clinician. Three threshold tiers:

- High-confidence positive: findings above this threshold display prominently in the report draft with source image slice reference. Set conservatively to minimize false positives.

- Low-confidence flag: findings between low and high thresholds flagged for explicit radiologist attention, not silently included or excluded.

- Below-threshold suppression: findings below the minimum suppressed from the report but logged for quality monitoring.

The threshold design is a regulatory decision. A system that autonomously includes findings above a confidence score threshold without mandatory clinician review functions differently from one that presents all findings for review. Your threshold architecture affects your intended use statement and FDA classification tier (Section 9).
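The three tiers can be sketched as a single triage function. The threshold values below are placeholders, not recommendations; real values would come from calibration and clinical validation. The `Disposition` enum and `triage` function are hypothetical names.

```python
from enum import Enum


class Disposition(Enum):
    INCLUDE = "include_in_draft"      # high-confidence positive
    FLAG = "flag_for_review"          # between the low and high thresholds
    SUPPRESS = "suppress_and_log"     # below the minimum; logged for quality monitoring


def triage(confidence: float, low: float = 0.30, high: float = 0.85) -> Disposition:
    """Map a model confidence score to one of the three tiers described above."""
    if not 0.0 <= low < high <= 1.0:
        raise ValueError("thresholds must satisfy 0 <= low < high <= 1")
    if confidence >= high:
        return Disposition.INCLUDE
    if confidence >= low:
        return Disposition.FLAG
    return Disposition.SUPPRESS


for score in (0.95, 0.50, 0.10):
    print(score, triage(score).value)
```

Keeping the thresholds as explicit parameters makes them easy to version and audit, which matters if they end up referenced in the intended use statement.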

Two-Step LLM Integration: Why Raw Inference to LLM Is the Hallucination Trap

The report generation LLM receives structured findings, not raw images. Two steps:

- Structured finding extraction from AI inference output: produce a JSON object with anatomy, finding type, severity, measurements, and source image references. Named entity recognition and NLP for radiology handle the mapping from model output to clinical vocabulary.

- Report generation from structured finding JSON: LLM with specialty-specific system prompt generates findings section and impression section. Temperature at 0.0–0.1 for clinical consistency. The LLM phrases and formats; it does not diagnose.
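A minimal sketch of the second step, assuming a generic request payload rather than any specific vendor's API: the model receives only the serialized findings plus a system prompt that forbids adding clinical content. The prompt wording, the payload keys, and `build_report_request` are illustrative assumptions.

```python
import json
from typing import Any, Dict, List

SYSTEM_PROMPT = (
    "You draft {specialty} reports. Use only the findings in the JSON provided. "
    "Do not add findings, measurements, locations, or severity terms that are not "
    "present in the JSON. Write a Findings section and an Impression section."
)


def build_report_request(findings: List[Dict[str, Any]], specialty: str) -> Dict[str, Any]:
    """Assemble a provider-agnostic request; the image itself is never included."""
    return {
        "system": SYSTEM_PROMPT.format(specialty=specialty),
        "user": json.dumps({"findings": findings}, sort_keys=True),
        "temperature": 0.0,  # low end of the 0.0-0.1 range, for consistent phrasing
    }


request = build_report_request(
    [{"anatomy": "right upper lobe", "finding": "nodule", "measurement_mm": 6.0,
      "confidence": 0.91, "source_slice": 42}],
    specialty="radiology",
)
print(request["user"])
```

Because the payload contains only text, the same builder works whether the request goes to a hosted API under a BAA or to a self-hosted model.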

Model choices with BAA availability for the report generation step:

- OpenAI GPT-5 family (via OpenAI for Healthcare, BAA via baa@openai.com). Critical caveat: the OpenAI BAA covers ZDR-eligible endpoints only, and image inputs are not ZDR-eligible, so image inputs to OpenAI APIs are not covered for PHI use. The text-only restriction also matters for ChatGPT HIPAA compliance. Section 8 details the full scope.

- Anthropic Claude (via direct API, AWS Bedrock, Google Cloud, or Azure; each path has its own BAA). HIPAA-ready Enterprise plans available. The only major foundation model accessible through all three major cloud providers with HIPAA-compliant infrastructure.

- Azure OpenAI under Azure HIPAA BAA. Text inputs explicitly covered; image inputs not extended in published documentation.

- Self-hosted: fine-tuned open-source models (Llama 3, Mistral, MedGemma 4B/27B) for no PHI transmission off-site. Best fit for teams that need to automate clinical notes without any external data flow.

Specialty-Specific Report Formats: Where Generalist Products Fail

Most AI imaging documentation tools are built for chest X-ray and CT, the highest-volume modalities. Specialty imaging has documentation requirements that generalist products handle poorly, and this is where new entrants beat Nuance/PowerScribe and Sectra.

Radiology: Structured Templates Beat Free-Text for AI-Generated Reports

A structured radiology report uses coded, templated sections rather than free-text narrative. RSNA’s RadReport template library provides 268 templates for standardized imaging scenarios. Honest tradeoff: per the European Society of Radiology’s 2023 update, RSNA has effectively frozen new template development at radreport.org, and the IHE MRRT profile saw limited adoption. RadReport is still the best free starting point, but it is not a living standard.

For AI-generated reports, map AI findings to structured reporting template fields, not free-text. Use LOINC radiology codes for section headers (18782-3 for the findings section, 19005-8 for the impression section). Support the scoring systems clinicians expect: Fleischner Society guidelines for pulmonary nodule characterization, ACR TI-RADS for thyroid, ACR BI-RADS for mammography AI reporting. CT scan documentation, MRI report generation, and X-ray AI report workflows all benefit from structured templates over narrative dictation.

Pathology: Synoptic Reporting Is Mandatory, Not Stylistic

Pathology report automation requires synoptic (checklist-based) formats per College of American Pathologists (CAP) cancer protocols. Map AI-detected findings to CAP required elements: tumor type, grade, margins, lymph node status, staging. The pathologist workflow depends on whole-slide imaging (WSI) viewer integration with annotation overlay. The pathologist must see AI findings directly on the slide, not just in a text report. Multi-stain studies (H&E primary diagnosis + IHC confirmatory stains) require synthesizing findings across stain types before report generation.

Ophthalmology: The Output Is a Grade on a Scale, Not a Narrative

Ophthalmology AI documentation produces graded severity outputs on established clinical scales: ETDRS grading for diabetic retinopathy (none, mild, moderate, severe NPDR, PDR), Humphrey Field Analyzer pattern for glaucoma, AMD grading. The report is a grade on a scale, not a narrative finding description. Fundamentally different from radiology’s findings-and-impression format.

Dermatology: Dermoscopy AI Crosses Into Clinical Decision Support

Dermoscopy AI report outputs map to structured clinical vocabularies: Breslow thickness risk stratification, ABCDE criteria documentation, dermoscopic pattern vocabulary (reticular, globular, homogeneous). The regulatory line is real: AI dermoscopy classification that recommends biopsy is clinical decision support (CDS), not documentation support. That distinction changes your FDA classification tier entirely (Section 9).

EHR and PACS Integration: Writing Reports Where Clinicians Work

An automated imaging report generation system that produces reports outside the EHR and PACS creates a workflow problem: the radiologist copy-pastes between systems, defeating the time savings. Production AI imaging documentation writes directly into both PACS (as DICOM SR) and the EHR (as FHIR DiagnosticReport). PACS and EHR integration are inseparable from the product’s core value.

FHIR Resources for Imaging Report Write-Back

Four FHIR resources:

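- FHIR ImagingStudy: references the DICOM study, links imaging procedure to patient and encounter. Reference it from the DiagnosticReport's imagingStudy element (renamed study in R5); basedOn points to the originating order (ServiceRequest), not to the ImagingStudy.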

- FHIR DiagnosticReport: the primary resource for imaging reports. Contains report status (preliminary → final), performing radiologist, result summary text, and references to finding Observations. This is the AI medical record summary for the imaging encounter.

- FHIR Observation: individual structured findings (nodule size, lesion location, severity grade) as discrete data elements linked to the DiagnosticReport.

- FHIR ImagingSelection (R5): published in FHIR R5 (2023). The mechanism for linking report findings to specific DICOM frames, series, or regions of interest. R4 environments use extensions or DocumentReference until R5 migration.
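To show how these resources reference each other, here is a sketch that assembles a FHIR R4 DiagnosticReport as a plain dictionary. It assumes R4 element names (`imagingStudy`, `result`, `conclusion`), uses a text-only `code` to avoid asserting a specific terminology binding, and takes resource ids as given. The function name and the restriction to two statuses are illustrative choices.

```python
from typing import Any, Dict, List

ALLOWED_STATUSES = {"preliminary", "final"}  # the draft-to-signed transition


def build_diagnostic_report(
    patient_id: str,
    imaging_study_id: str,
    observation_ids: List[str],
    conclusion: str,
    status: str = "preliminary",
) -> Dict[str, Any]:
    """Build an R4 DiagnosticReport body linking the study and finding Observations."""
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"unsupported status: {status}")
    return {
        "resourceType": "DiagnosticReport",
        "status": status,
        "code": {"text": "Imaging report"},
        "subject": {"reference": f"Patient/{patient_id}"},
        "imagingStudy": [{"reference": f"ImagingStudy/{imaging_study_id}"}],
        "result": [{"reference": f"Observation/{oid}"} for oid in observation_ids],
        "conclusion": conclusion,
    }


draft = build_diagnostic_report("p1", "study-9", ["obs-1", "obs-2"], "No acute findings.")
print(draft["status"], draft["result"])
```

The AI-generated draft would be written as `preliminary` and moved to `final` only after the radiologist approves it in the review UI.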

FHIR and HL7 v2 ORU messages both remain relevant: FHIR for modern EHR integration, ORU for legacy RIS/PACS integration and older order management systems. SMART on FHIR provides the embedded launch framework for surfacing your review UI inside the EHR. For Epic Radiant, Cerner RadNet, and similar RIS targets, the IHE Radiology integration profiles define the interoperability contracts.

DICOM Structured Reports: When SR Is the Answer and When It Isn’t

DICOM SR (Structured Report) is the DICOM-native format for structured reports: a DICOM object containing coded finding data, storable alongside image series in PACS. DICOM SR Template 1500 (Measurement Report) is the standard for quantitative imaging measurements, required for compatibility with most enterprise PACS viewers.

Honest tradeoff: DICOM SR is not human-readable in the PACS viewer without SR rendering support. Confirm your target PACS supports SR display before committing. DICOM Presentation State objects handle AI-detected finding annotations (bounding boxes, segmentation overlays) linked to the image series.

Radiologist Review Queue Design

The radiologist review queue design shapes the daily radiologist workflow and determines whether an AI documentation product gets used or abandoned:

- Review time target: reviewable and approvable in under 60 seconds for normal studies. Report turnaround time (TAT) is what radiology departments measure, and over 90 seconds the product fails its value proposition.

- Side-by-side image and report view: the radiologist views source images alongside the draft in a structured report editor without switching windows. Hanging protocol integration matters.

- Finding-to-image linking: clicking any finding navigates the image viewer to the source slice and region. The most critical trust-building feature for radiologist adoption, and the one most demos skip.

- Confidence visualization: display AI confidence scores inline with findings. Low-confidence findings visually distinguished to direct attention through worklist prioritization.

- One-click approval with inline editing: edit the draft in the review UI, then approve. No switching to a separate editor.

HIPAA Compliance for Medical Imaging AI: DICOM PHI Is Different

Medical images carry PHI in two distinct locations requiring separate compliance strategies: DICOM metadata headers and burned-in pixel-level annotations. A compliance approach for text-based EHR data does not cover imaging PHI. If you’re building HIPAA-compliant software for imaging AI, this is where scope gets underestimated.

DICOM PHI Lives in Two Places: Headers and Pixels

DICOM headers contain up to 18 HIPAA Safe Harbor identifiers across hundreds of tags: PatientName (0010,0010), PatientBirthDate (0010,0030), PatientID (0010,0020), InstitutionName, DeviceSerialNumber, and more.

DICOM pixel data may contain burned-in PHI: text overlaid on image pixels by the device at capture. Common in ultrasound, older fluoroscopy, nuclear medicine.

DICOM de-identification requires both header tag removal/replacement (per DICOM PS3.15 Annex E) and OCR-based pixel-level text detection for burned-in PHI. Pseudonymization (replacing PHI with a consistent pseudonym) rather than full de-identification is appropriate for longitudinal research workflows where patient linkage must be preserved.
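Pseudonymization with preserved linkage can be sketched with a keyed hash: the same PatientID always maps to the same pseudonym, but the mapping cannot be reproduced without the secret key. The example below is deliberately partial. It treats the header as a plain dictionary keyed by attribute keyword, blanks only the handful of attributes named above, and does not touch pixel data, so it is not an implementation of the PS3.15 Annex E profile. All function and constant names are hypothetical.

```python
import hashlib
import hmac
from typing import Dict

# Small illustrative subset; the real profile covers far more attributes.
HEADER_KEYWORDS_TO_BLANK = (
    "PatientName", "PatientBirthDate", "InstitutionName", "DeviceSerialNumber",
)


def pseudonymize_patient_id(patient_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym from the PatientID using HMAC-SHA256."""
    digest = hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "PSN-" + digest[:16]


def pseudonymize_header(header: Dict[str, str], secret_key: bytes) -> Dict[str, str]:
    """Return a copy with listed attributes blanked and PatientID replaced."""
    cleaned = dict(header)
    for keyword in HEADER_KEYWORDS_TO_BLANK:
        if keyword in cleaned:
            cleaned[keyword] = ""
    if cleaned.get("PatientID"):
        cleaned["PatientID"] = pseudonymize_patient_id(cleaned["PatientID"], secret_key)
    return cleaned


key = b"replace-with-a-managed-secret"  # placeholder; load from a secrets manager
print(pseudonymize_header({"PatientID": "MRN-0001", "PatientName": "DOE^JANE"}, key))
```

The key has to be stored and rotated like any other credential, since anyone holding it can recompute the pseudonym for a known PatientID.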

An audit trail covering all PHI access is a baseline requirement. SOC 2 Type II is expected for enterprise health system buyers. For international deployments, GDPR imaging data requirements add consent and data residency obligations on top of HIPAA.

The BAA Chain Is Longer Than Most Health Tech Products

The BAA chain for a HIPAA-compliant AI imaging documentation app is unusually long:

- Cloud hosting (AWS, GCP, Azure): BAA available from all three.

- DICOM storage: Google Cloud Healthcare API, AWS HealthImaging, Azure DICOM service all offer BAAs.

- AI inference APIs: BAA required. Confirm scope covers image data, not only text.

- Report generation LLM: BAA required if structured findings containing PHI are sent to the API.

The imaging scope trap, validated by current contracts in market:

- OpenAI (via OpenAI for Healthcare): BAA covers Zero Data Retention (ZDR) eligible endpoints only. Image inputs are NOT ZDR-eligible. OpenAI’s BAA does not cover image inputs for PHI use as of 2026.

- Azure OpenAI: BAA covers text inputs explicitly per Microsoft’s published documentation. Image inputs not extended.

- Anthropic Claude: HIPAA-ready Enterprise plans with BAA via direct API, AWS Bedrock, Google Cloud, or Azure. The only major foundation model available across all three cloud providers with HIPAA-compliant infrastructure.

The bottom line: a BAA with cloud AI provider coverage for clinical text does not extend to DICOM image files automatically. Confirm in writing that the BAA covers imaging data before sending pixels.
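One way to enforce that rule in code is a gate that every outbound call passes through, checking the documented BAA scope for the data kind being sent. The sketch below uses made-up vendor names and a hypothetical `BaaRecord` structure; the scope values would come from your signed agreements, not from this example.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class BaaRecord:
    """What a signed BAA with one vendor is documented to cover (hypothetical schema)."""
    vendor: str
    signed: bool
    covered_data: FrozenSet[str]  # e.g. {"text"} or {"text", "image"}


def may_send(record: BaaRecord, data_kind: str) -> bool:
    """Allow PHI to leave only if a BAA is signed and its scope names this data kind."""
    return record.signed and data_kind in record.covered_data


text_only_llm = BaaRecord("example-llm-vendor", True, frozenset({"text"}))
dicom_store = BaaRecord("example-dicom-store", True, frozenset({"text", "image"}))

print(may_send(text_only_llm, "text"))   # structured findings are in scope
print(may_send(text_only_llm, "image"))  # pixels are not
print(may_send(dicom_store, "image"))
```

Defaulting to denial when a data kind is not listed mirrors the contractual position: coverage that is not written down is not coverage.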

FDA Classification for AI Imaging Documentation: A Tiered Reality

FDA classification for AI imaging documentation depends on what the AI output is used for and whether it requires clinician interpretation before clinical action. Context: 1,451 cumulative FDA-authorized AI/ML-based SaMD devices through end-2025. Radiology: 76% of authorizations. 510(k) clearance: 97%. De Novo pathway: ~3%. PMA: <0.5%. If you need health AI FDA clearance, understanding which tier you fall into is the first architectural decision.

Non-Device Software: When You Don’t Need Clearance

- Image display and enhancement only: software displaying DICOM images with windowing/leveling and measurement tools, without AI-generated findings, is general-purpose image processing, not a medical device.

- Workflow management: worklist prioritization, study routing, and template selection driven by DICOM metadata (not AI inference on image content) are administrative functions.

- Structured data entry tools without AI inference: documentation software, not a medical device.

- De-identified research viewers: no clinical decision output means no device classification.

Computer-Aided Detection/Diagnosis (CAD): The 510(k) Path

Computer-aided detection software has been regulated as Class II under 21 CFR 892.2050 since 1998. Modern AI-based computer-aided diagnosis follows the same pathway.

Intended use matters more than technology. If your AI inference output is presented to a radiologist as a “second read” finding, it is CAD regardless of model architecture. Established predicates exist in mammography (iCAD, Hologic), chest X-ray (Qure.ai, Lunit), and CT colonography. Predicate device identification is step one, and substantial equivalence requires clinical validation data. Indications for use must match the predicate’s scope.

Software As A Medical Device (SaMD): Higher Risk Tiers for Autonomous AI

FDA SaMD at the highest risk covers two categories: autonomous AI (no required radiologist review) and AI that outputs treatment recommendations (biopsy recommended, surgery indicated).

De Novo pathway precedents for AI imaging:

- LumineticsCore (formerly IDx-DR, Digital Diagnostics): De Novo April 2018, Class II. First autonomous AI diagnostic system. Pivotal trial: sensitivity 87.2%, specificity 90.7%.

- Viz.ai LVO: Computer-Aided Triage and Notification (CADt), De Novo February 2018. Product code QAS, regulation 21 CFR 892.2080. 220M+ lives, 1,400+ hospitals.

- Other DR autonomous AI: EyeArt (Eyenuk, 510(k) 2020 using LumineticsCore as predicate), AEYE Diagnostic Screening (2022).

Builder alert: a review queue designed for one-click approval in 3 seconds is functionally autonomous from a regulatory standpoint. Design review for genuine clinician engagement. The clinical decision support exemption does not apply when AI output drives clinical action without meaningful physician intermediation.

Predetermined Change Control Plans: The 2024 Mechanism for AI That Retrains

FDA finalized guidance on Predetermined Change Control Plans (PCCPs) for AI-Enabled Device Software Functions on December 4, 2024. This replaces the “every model update needs a new 510(k)” trap.

PCCPs let manufacturers define expected modifications, validation protocols, and potential performance impacts upfront. FDA pre-authorizes the plan as part of the initial marketing application. Subsequent changes within the approved PCCP do not require a new 510(k) or PMA supplement. Directly relevant for AI imaging products that retrain on production data.

Note on 21 CFR Part 820: as of February 2, 2026, retitled Quality Management System Regulation (QMSR), incorporating ISO 13485:2016 by reference.

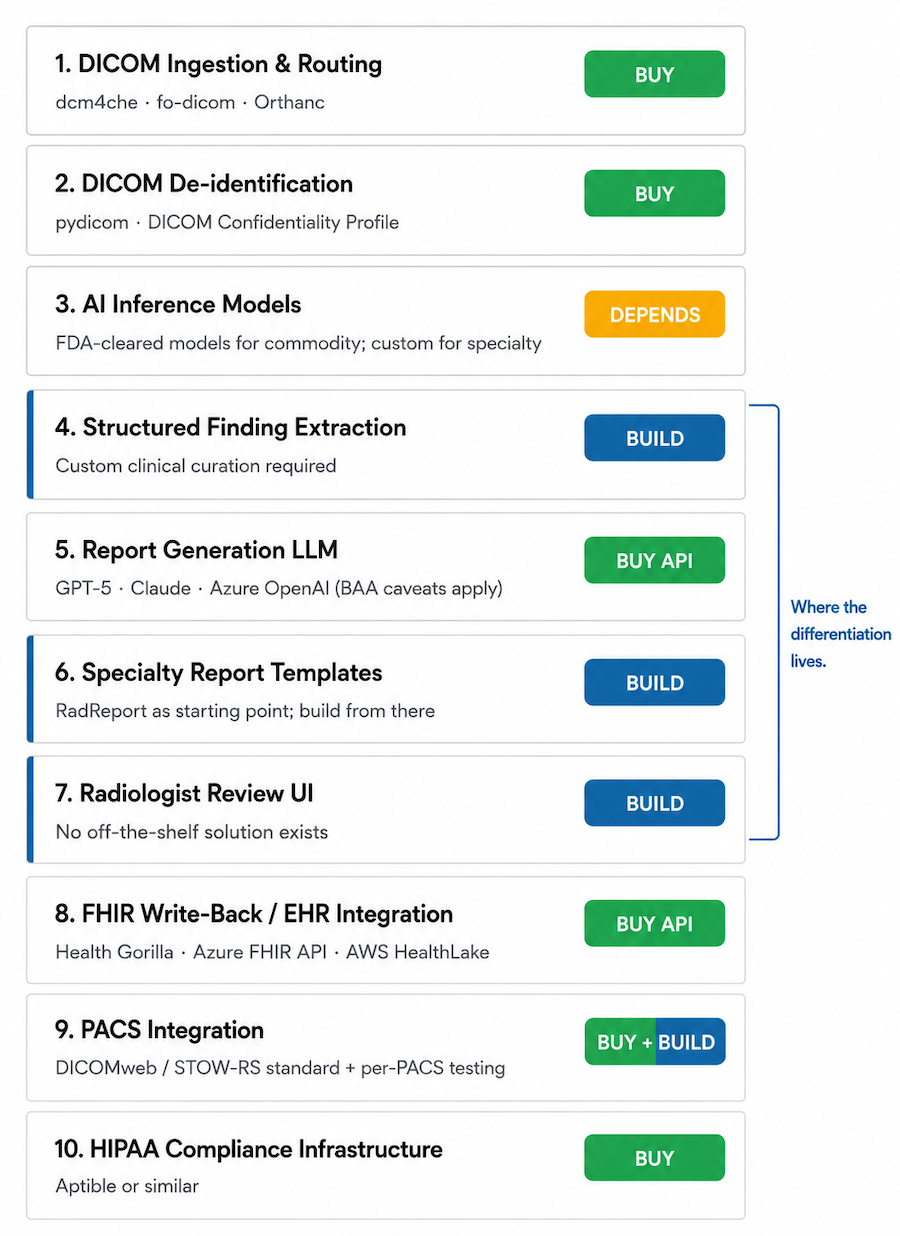

Build-vs-Buy Decision Matrix: What to Build, What to Use as an API

Not every component of a medical image AI documentation software system deserves bespoke build.

| Capability | Build Argument | Buy / API Argument | Recommendation |
|---|---|---|---|
| DICOM ingestion and routing | Full control; no PHI transmission | dcm4che, fo-dicom, Orthanc handle edge cases custom code misses | Buy (open-source toolkit) |
| DICOM de-identification | Full control; no PHI exposure | pydicom; mature, well-tested | Buy (open-source library) |
| AI inference models | Competitive moat; specialty accuracy; IP | Massive dataset requirements; FDA-cleared models provide coverage | Depends – buy for commoditized modalities; build for specialty differentiation |
| Structured finding extraction | Specialty schema; ontology IP | LLMs help, but ontology mapping requires specialty curation | Build (clinical curation required) |
| Report generation LLM | No PHI transmission; lower cost at scale | GPT-5/Claude quality hard to match; BAA with caveats (Section 8) | Buy API |
| Specialty report templates | Core IP; clinical differentiation | No off-the-shelf library; RadReport is a starting point | Build |
| Radiologist review UI | Core product experience; differentiator | No off-the-shelf solution; review UX is where products win or lose | Build |
| FHIR write-back / EHR integration | Custom per EHR target | Health Gorilla, Azure FHIR API, AWS HealthLake; SMART on FHIR | Buy API |
| PACS integration | Per-PACS DICOM SR write-back | DICOMweb reduces custom work; most modern PACS support STOW-RS | Buy (standard) + Build (per-PACS testing) |
| HIPAA compliance infrastructure | Full control | Aptible or similar; saves significant time early-stage | Buy |

Where the Differentiation Investment Goes

Highest-impact build investment: the review interface and the specialty-specific finding schema and template library. Established players (Nuance PowerScribe, Sectra) are most constrained by legacy architecture here. Specialty-focused entrants build superior products for specific workflows. DICOM pipeline and compliance are the entry ticket. Review UX and specialty depth are the product.

The AI Imaging Documentation Build Checklist

Before deploying AI clinical imaging documentation with real patient studies, validate against this checklist.

DICOM Pipeline and Ingestion

- DICOM C-STORE SCP or DICOMweb STOW-RS endpoint operational and tested against target PACS.

- DICOM metadata parsing validated for all target modalities.

- Pixel preprocessing pipeline validated per modality (HU windowing for CT; stain normalization for WSI; intensity normalization for MRI).

- DICOM de-identification tested: header tags AND burned-in pixel PHI detection for applicable modalities.

- BAA chain complete for all DICOM storage and processing services.

AI Inference and Finding Structuring

- AI models validated on representative clinical data from the target environment, not just benchmark datasets.

- Confidence thresholds calibrated and clinically validated.

- Structured finding extraction step implemented between inference and report generation (not raw inference to LLM).

- Hallucination testing: 50+ studies reviewed for report findings not supported by inference output.

- Out-of-distribution detection: behavior on unusual studies and imaging artifacts tested.

Report Generation and Review UX

- Report generation temperature set to 0.0–0.1 for clinical consistency.

- Specialty-specific templates reviewed by at least one practicing clinician.

- Finding-to-image linking implemented in the review UI (click finding → navigate to source image location).

- Review time benchmarked on representative study types (target: < 60 seconds for normal studies).

- DICOM SR and/or FHIR DiagnosticReport write-back tested against target PACS and EHR.

Regulatory and Compliance

- FDA intended use statement drafted and reviewed by regulatory counsel.

- FDA classification determination documented: non-device, CAD 510(k), or De Novo/PMA pathway.

- PCCP drafted if AI model retraining is part of the post-deployment plan.

- HIPAA risk assessment completed; BAA chain documented (DICOM storage, AI inference APIs, report generation LLM, cloud hosting). Verify BAA scope covers image data, not only text. If the platform also ingests data from connected clinical devices (e.g., wearables), the BAA chain extends to those data streams too.

- Audit logging implemented for all PHI access: study access, report generation, radiologist review events, report approval.

- SOC 2 Type II roadmap in place if targeting enterprise health system buyers.
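
For the audit logging item above, a minimal sketch of what one audit record might look like is shown below. The event names mirror the four PHI access events listed in the checklist. The field names, the `make_audit_record` helper, and the hash chaining (each record stores the previous record's hash to make tampering easier to detect) are assumptions for illustration, not requirements stated by HIPAA.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

# The four PHI access events named in the checklist.
ALLOWED_EVENTS = {"study_access", "report_generation", "review_event", "report_approval"}


def make_audit_record(
    event_type: str,
    user_id: str,
    study_instance_uid: str,
    previous_hash: str = "",
    timestamp: Optional[datetime] = None,
) -> dict:
    """Build one audit record whose hash covers its own fields and the previous hash."""
    if event_type not in ALLOWED_EVENTS:
        raise ValueError(f"unknown audit event type: {event_type}")

    record = {
        "event_type": event_type,
        "user_id": user_id,
        "study_instance_uid": study_instance_uid,
        "timestamp": (timestamp or datetime.now(timezone.utc)).isoformat(),
        "previous_hash": previous_hash,
    }
    # Hash a canonical JSON form so the same fields always produce the same digest.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    return record
```

In practice each record would be appended to write-once storage, with the latest hash passed as `previous_hash` for the next event. Note that the record carries identifiers only, not report text or pixel data.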

Why Choose Topflight for AI Medical Image Documentation Development

Topflight builds HIPAA-compliant clinical AI applications, including imaging documentation platforms, EHR integrations, and specialty-specific clinical workflow tools. We understand the full technical stack because we’ve built against it: DICOM pipeline architecture, AI inference integration, LLM-based structured report generation, FHIR write-back, and the compliance and FDA classification requirements that clinical deployment demands.

- DICOM pipeline architecture: ingestion (DIMSE and DICOMweb), de-identification per DICOM PS3.15, modality routing, pixel preprocessing by modality.

- AI inference integration: model evaluation and selection, confidence threshold design, finding structuring from inference output, multimodal report generation.

- Specialty report templates: clinician-reviewed templates for radiology, pathology, ophthalmology, and dermatology use cases.

- EHR and PACS integration: FHIR R4/R5 DiagnosticReport and ImagingStudy write-back, DICOM SR, SMART on FHIR, Epic Radiant and Cerner RadNet integration.

- HIPAA compliance for imaging PHI: DICOM de-identification pipeline, burned-in PHI detection, BAA chain management for imaging AI services.

- FDA classification guidance: mapping your AI inference and report generation features to the correct regulatory tier before build, not after.

Building an AI-powered imaging documentation product or adding automated reporting to an existing imaging platform? Talk to Topflight about your modality focus, your EHR and PACS targets, and your FDA regulatory strategy.

Frequently Asked Questions

Does AI-generated radiology reporting software need FDA clearance?

It depends on intended use. Display-only software without AI inference is generally treated as non-device. AI that presents findings for radiologist review is typically regulated as CAD (Class II, 510(k)). Autonomous AI without required clinician review typically requires De Novo or PMA.

What FHIR resources are used to write an AI-generated imaging report to an EHR?

FHIR DiagnosticReport for the report itself, ImagingStudy for the procedure reference, Observation for individual structured findings, and ImagingSelection (R5) for linking findings to specific image regions.
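
To make the resource relationships concrete, here is a minimal sketch that assembles a draft DiagnosticReport and its Observation findings as plain Python dictionaries. It assumes FHIR R4 field names (`imagingStudy`, which R5 renames to `study`), uses text-only codes instead of real LOINC or SNOMED codings, and omits ImagingSelection. The function name, the input shape, and the `finding-N` IDs are hypothetical, and a real integration would follow the target EHR's profiles.

```python
from typing import Dict, List, Tuple


def build_diagnostic_report(
    patient_id: str,
    imaging_study_id: str,
    conclusion: str,
    findings: List[Dict[str, str]],
) -> Tuple[dict, List[dict]]:
    """Return (DiagnosticReport, [Observation, ...]) for a draft awaiting review."""
    subject = {"reference": f"Patient/{patient_id}"}
    observations: List[dict] = []
    result_refs: List[dict] = []

    for index, finding in enumerate(findings, start=1):
        obs_id = f"finding-{index}"  # hypothetical local ID scheme
        observations.append({
            "resourceType": "Observation",
            "id": obs_id,
            "status": "preliminary",
            "code": {"text": finding["label"]},
            "subject": subject,
            "valueString": finding["description"],
        })
        result_refs.append({"reference": f"Observation/{obs_id}"})

    report = {
        "resourceType": "DiagnosticReport",
        # "preliminary" until the radiologist signs; then the status moves to "final".
        "status": "preliminary",
        "code": {"text": "Imaging report"},
        "subject": subject,
        "imagingStudy": [{"reference": f"ImagingStudy/{imaging_study_id}"}],
        "result": result_refs,
        "conclusion": conclusion,
    }
    return report, observations
```

Keeping the draft at `preliminary` until sign-off keeps the radiologist review step visible in the data itself, not only in the UI.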

How do I prevent AI hallucinations in generated radiology reports?

Use a two-step architecture: extract structured findings from AI inference, then generate the report from those structured findings rather than the raw image. Set LLM temperature to 0.0–0.1 so the same findings produce consistent report text.

Which imaging modalities are best suited for AI-assisted report generation?

High-volume modalities with established structured templates work best: chest X-ray, mammography, retinal photography for diabetic retinopathy. Specialty modalities (pathology WSI, dermoscopy) require dedicated schema investment.

How long does it take to build an AI imaging documentation platform?

A working demo takes weeks. A production system with DICOM pipeline, FDA classification, EHR integration, and validated review UX takes 12–24+ months depending on modality scope and regulatory pathway.