A founder came to us with a prototype that looked far more mature than most pre-seed health apps. On the surface, it was exactly the kind of product that makes health app regulatory compliance feel like a tomorrow problem: built fast with Claude, polished enough for demos, and already headed toward conversations with hospital groups and payors. He had a developer handoff document, a clear product vision, and the kind of momentum founders dream about.

What he did not have was a sober read on what some of his core features actually meant once they landed in a hospital legal review.

That is the trap with AI coding tools like Claude, Lovable, Cursor, and Replit. They are excellent at helping you turn vibe coding prompts and product requirements into an AI-generated app that looks like working software. They are terrible at warning you when a feature quietly crosses from “smart wellness product” into “possible FDA question,” or when your data flow turns a harmless-looking prototype into a HIPAA problem. And those issues usually do not show up when the app is being built. They show up later, when the stakes are higher and the rebuild is more expensive.

This article is the fast triage layer: not another deep dive into one framework, but a single-pass guide to the regulatory tripwires most likely to derail an AI-built health app before enterprise traction ever starts.

I built a health app with Claude (Anthropic), Lovable, or Cursor. What regulatory issues could I have?

If your app interprets health data, scores risk, or recommends treatment, you may have an FDA problem. That is the FDA question founders of AI-built health apps usually ask too late. If it stores or transmits health information, you may have a HIPAA or FTC problem. And if none of that was scoped before a developer handoff or enterprise pitch, the first place you may discover it is in hospital legal review.

Key Takeaways

- AI coding tools can help you build a convincing health app prototype fast, but they will not tell you when a feature quietly creates FDA, HIPAA, or enterprise-review risk.

- The real problem often shows up late: not during prototyping, but when a hospital legal team, payor, or investor starts asking what the app actually does and where the data goes.

- The smartest path is usually not “build less,” but “sequence better”: launch a defensible V1, then add higher-risk clinical functionality in V2 with the right regulatory plan behind it.

Table of Contents

- Why AI-Built Health Apps Have a Specific Regulatory Blind Spot

- The Features That Get Flagged — A Vibe-Built App Triage

- What Actually Happens in a Hospital Legal Review

- The Fast Triage — Which Framework Applies to Your App

- The V1/V2 Path — Getting to Market Without Building a Liability Trap

- Why Topflight for Regulatory Scoping and Health App Development

Why AI-Built Health Apps Have a Specific Regulatory Blind Spot

AI coding tools can help you build faster. What they cannot do is make FDA compliance decisions about your health app for you. That is the blind spot: a prototype can look polished, useful, and investor-ready while still carrying feature-level risk that nobody notices until a hospital legal team or enterprise buyer starts asking harder questions.

Intended Use Matters More Than the Code

Regulators do not care whether a feature came from Claude, Cursor, or Lovable. They care what the app is supposed to do.

If your product helps users interpret symptoms, identify medication issues, or respond to biometric changes, you may be moving into regulated territory. That line comes from FDA digital health guidance and the 21st Century Cures Act, not from how impressive the prototype looked in a demo.

The first question is not “what built this?” It is “what claim is this feature making?”

Useful Outputs Can Become Risky Outputs

AI tools are good at making software more helpful by making outputs more specific. In health apps, that can become a problem fast.

Risk usually rises when the app starts to:

- grade medication conflicts

- perform risk scoring on symptom severity

- generate patient-specific recommendations

- produce a treatment recommendation from health data

At that point, the app is no longer just displaying information. It may be acting more like clinical decision support (CDS) or even Software as a Medical Device (SaMD).

The Build Workflow Has No Natural Checkpoint

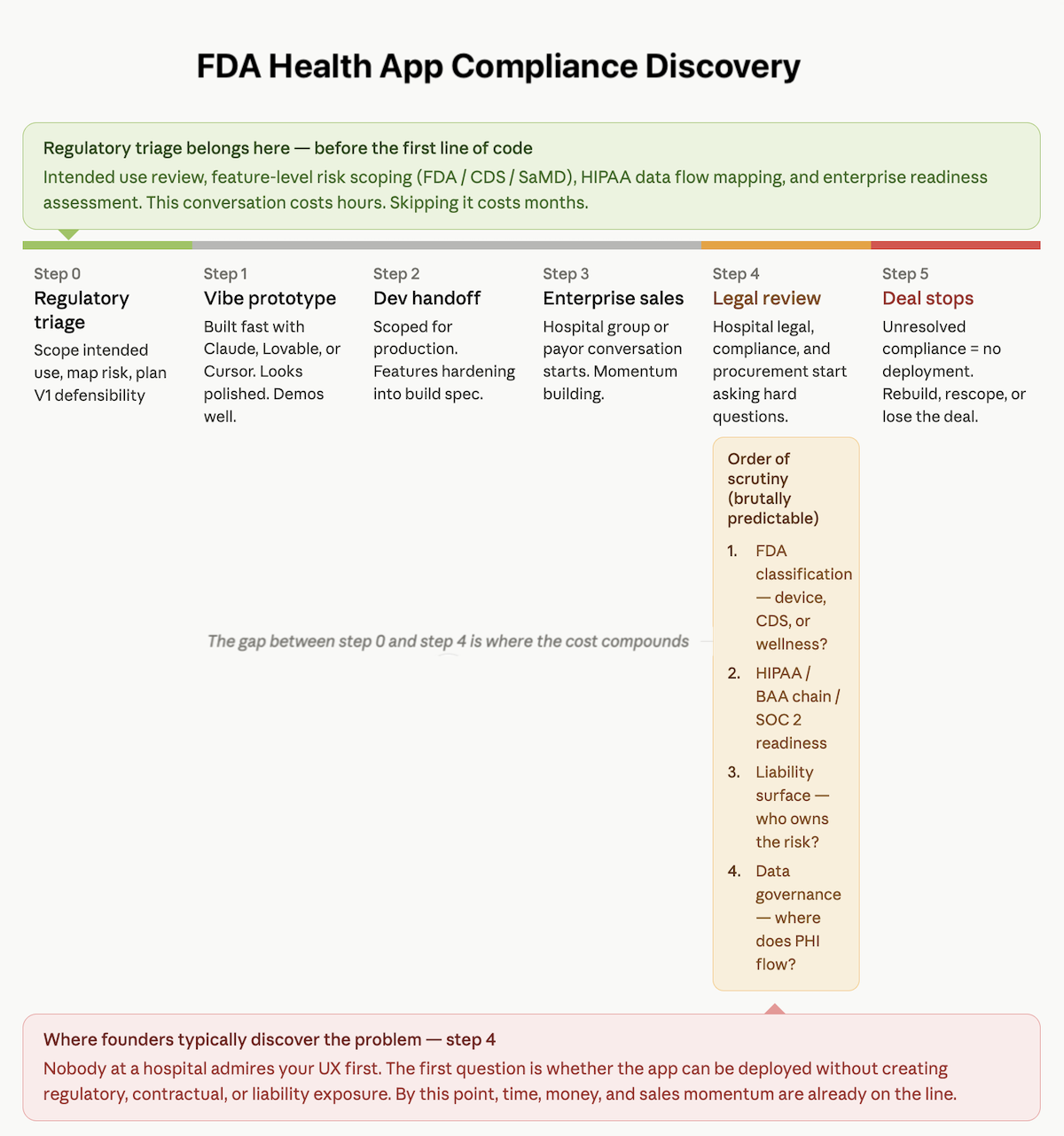

This is the real trap. A founder writes the prompt, the tool generates the prototype, and the dev shop scopes the build. But nobody in that sequence is automatically responsible for asking whether the feature set creates regulatory exposure.

That is why founders often discover the problem late, after time, money, and sales momentum are already on the line, and usually before anyone has asked whether the stack is ready for enterprise-grade, HIPAA-compliant software development.

AI-built health apps do not fail because the tools are bad. They fail because no one stopped to do the regulatory triage early enough.

The Features That Get Flagged — A Vibe-Built App Triage

Most founders do not need another long explanation of every regulatory framework. They need a fast way to see which features are likely to trigger scrutiny, especially around symptom checkers, medication logic, and any wearable-triggered intervention.

That is where FDA risk around clinical decision support usually stops being abstract and starts becoming painfully specific.

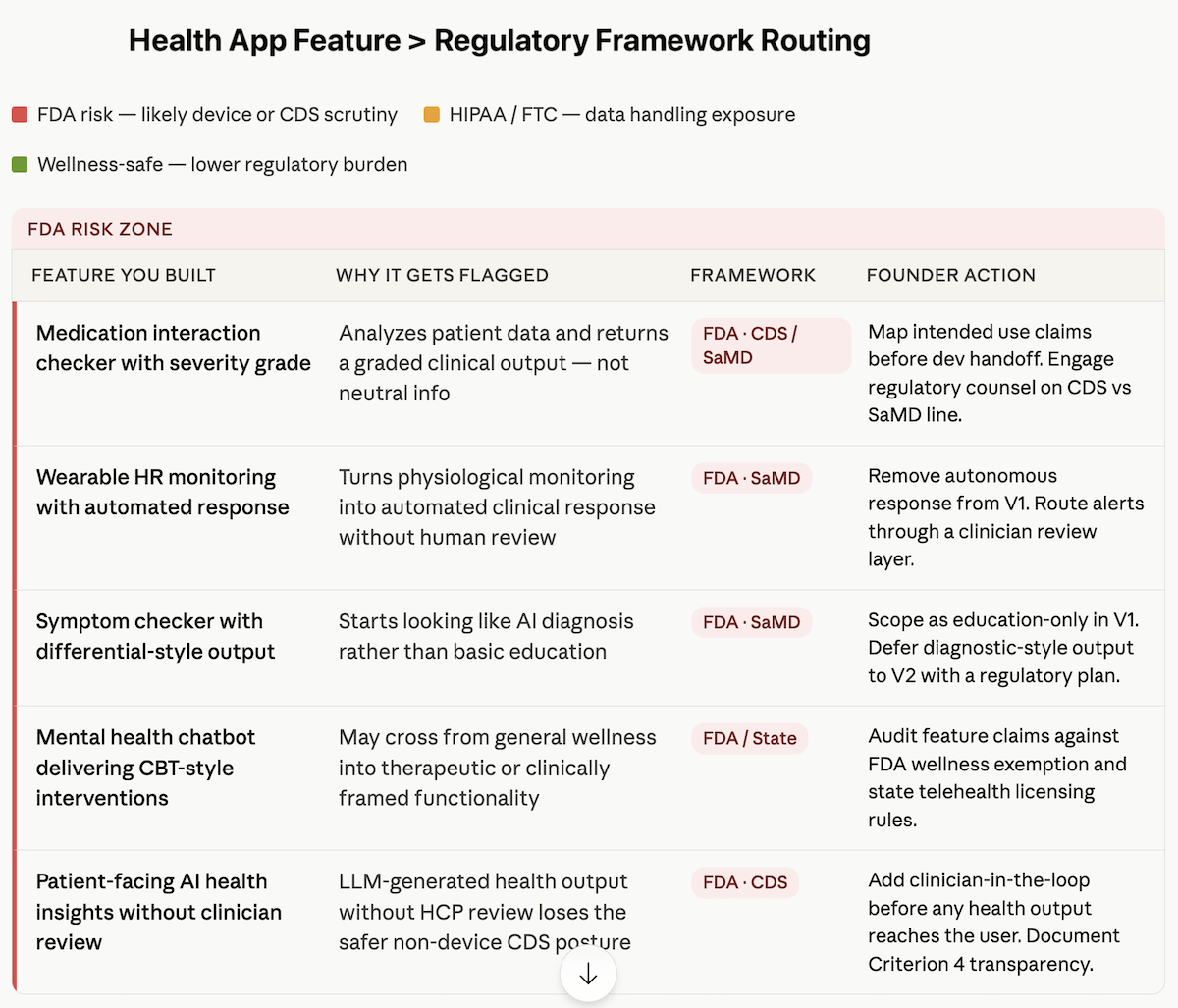

The table below maps the most common AI-built feature types to the tripwire they trigger and the Topflight article that covers that issue in depth.

| Feature you built with AI | Why it gets flagged | Framework | Go deeper |

| Medication interaction checker with severity grade | It analyzes patient-entered data and returns a graded clinical output, not just neutral information | FDA — CDS / SaMD | Is My Health AI a Medical Device? |

| Wearable heart rate monitoring app that triggers an automated response | It turns physiological monitoring into an automated clinical response without human review | FDA — SaMD | When Does an RPM App Become a Medical Device? |

| Symptom checker with differential-style output | It starts looking like AI diagnosis rather than basic education | FDA — SaMD | Is My Health AI a Medical Device? |

| Mental health chatbot delivering CBT-style interventions | It may move beyond general wellness into therapeutic or clinically framed functionality | FDA / state-level issues | I Built a Therapy Chatbot with Cursor |

| Patient-facing AI-generated health insights | An LLM-generated health recommendation delivered without clinician review can lose the safer CDS posture | FDA — CDS | You Added AI to Your Health App |

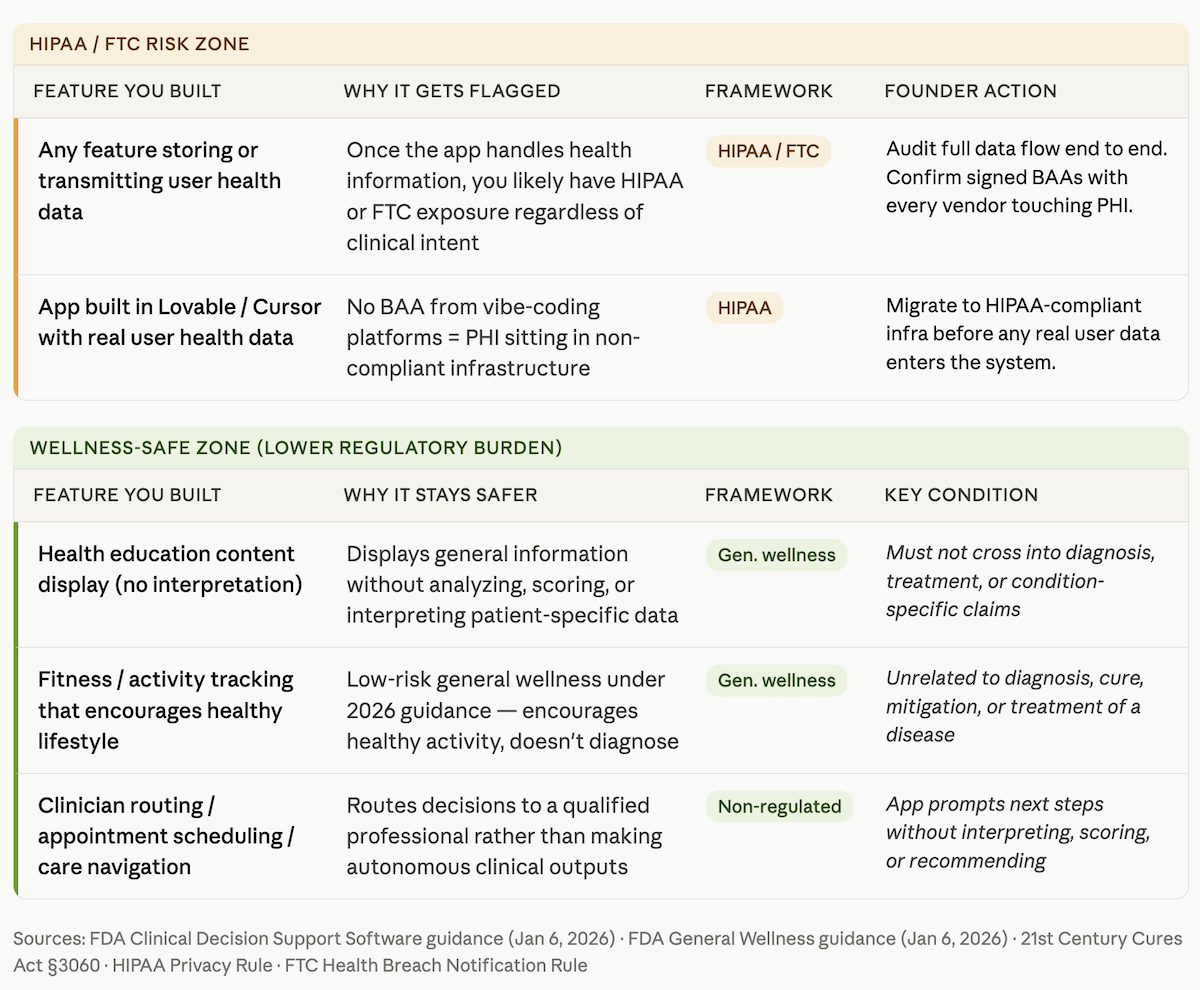

| Any feature storing user health data | Once the app handles health information, you likely have HIPAA or FTC exposure | HIPAA / FTC | HIPAA Compliance for AI-Generated Code |

| App built in Lovable/Cursor with real user health data | No BAA from Lovable/Cursor = PHI in non-compliant infrastructure | HIPAA | Turn Your Lovable Prototype into a HIPAA-Compliant Health App |

Founder Alert: Disclaimers Do Not Save You

“This is not medical advice” is not a magic spell. It does not restore a general wellness exemption, preserve a CDS exemption, or buy you FDA enforcement discretion. If the product behaves like it is interpreting health data, scoring risk, or suggesting treatment, regulators and enterprise reviewers will look at the feature itself, not your fine print. That applies whether the output came from a custom model, a medication interaction checker, or a Claude prompt stitched into your backend.

That is the broader pattern: the more specific, patient-facing, and actionable the output becomes, the less room you have to argue that the app is “just informational.” And that is usually where founders discover they were building a regulated product without budgeting for one.

What Actually Happens in a Hospital Legal Review

This is usually the moment founders realize they were preparing for the wrong meeting. In a health system pilot or payor review, nobody starts by admiring your UX or asking how fast Claude helped you ship.

The first question is whether the app can be deployed without creating regulatory, contractual, or liability exposure. That is where HIPAA-compliant health app development stops being a nice phrase on a website and becomes a live test.

The Order of Scrutiny Is Brutally Predictable

1. FDA Classification

The first issue is whether the app looks like a medical device, clinical decision support tool, or something still defensible as wellness software. If that answer is unclear, the deal slows down immediately. Nobody at a hospital wants to discover halfway through procurement that they are piloting software with unresolved FDA questions.

2. HIPAA, Business Associate Agreement (BAA) Requirements, and SOC 2

Next comes the infrastructure check. Where does data go? Which vendors touch it? Do you have signed BAAs across the stack? Is there a risk assessment? For many enterprise digital health buyers, the lack of a SOC 2 report may not kill the conversation on the spot, but it absolutely makes you look like a company that showed up to investor diligence wearing a hoodie that says “trust me, bro.”

For the broader policy picture, see AI in healthcare compliance.

3. Liability Surface

Then legal asks the uncomfortable question: if a user acts on an output and something goes wrong, who owns that risk? Apps that display information create a smaller liability surface. Apps that interpret, score, or recommend action create a bigger one.

4. Data Governance

Finally, reviewers look at what data is collected, where it flows, whether it includes protected health information (PHI), and whether any third party sits outside the approved environment. If those answers are fuzzy, health system procurement gets nervous fast.

That is the punchline founders hate: these questions are answered before the feature conversation really starts.
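The BAA and data governance questions above come down to an inventory check: list every vendor in the stack, note whether it touches PHI, and note whether a BAA is signed. A minimal Python sketch of that check follows. The `Vendor` type, the vendor names, and the answers are hypothetical placeholders, and the real inventory comes from your contracts and data flow map, not from code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    """One third party in the stack (hypothetical structure for illustration)."""
    name: str
    touches_phi: bool  # does protected health information reach this vendor?
    baa_signed: bool   # is a Business Associate Agreement in place?


def vendors_missing_baa(vendors: list[Vendor]) -> list[str]:
    """Return the names of vendors that receive PHI without a signed BAA."""
    return [v.name for v in vendors if v.touches_phi and not v.baa_signed]


# Placeholder stack: names and answers are invented for the example.
stack = [
    Vendor("cloud-host", touches_phi=True, baa_signed=True),
    Vendor("llm-api", touches_phi=True, baa_signed=False),
    Vendor("analytics", touches_phi=False, baa_signed=False),
]

print(vendors_missing_baa(stack))  # ['llm-api']
```

Any name this returns is a gap a reviewer is likely to ask about. An empty list only means the inventory you typed in is consistent. It does not mean the inventory is complete.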

The Fast Triage — Which Framework Applies to Your App

If you want a fast read on whether you are looking at an FDA clearance issue, a HIPAA issue, or both, start with three questions. This is not a full regulatory assessment. It is the fastest way to figure out which conversation you need next.

1. Does Your App Tell People What Their Health Data Means?

If the app does more than display information — if it interprets, scores, prioritizes, or draws a conclusion about a condition — you likely have an FDA question. That is the core line in the wellness app vs medical device software debate: display is safer; interpretation creates risk.

2. Does the App Produce Health-Related Output Without Clinician Review?

If a feature delivers a suggestion, risk score, or next-step guidance directly to the user, the issue is not just whether the output is helpful. It is whether the product’s clinical intended use now looks more like recommendation than simple support. In health apps, “display vs recommend” is where the calm demo often turns into a regulatory meeting nobody budgeted for. That line was sharpened again in the January 2026 CDS guidance.

3. Does the App Store, Process, or Transmit Health Information?

If yes, you almost certainly have a privacy and compliance question. In provider-connected workflows, that usually means HIPAA. Outside that context, the FTC Health Breach Notification Rule may still matter.

That is the practical shortcut: first identify whether your problem is interpretation, autonomous output, or data handling. Then go deeper in the right place.
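The three questions can be written down as a small checklist function. The Python sketch below is illustrative only: the `AppProfile` fields and the `provider_connected` flag are assumptions made for the example, and real answers are rarely clean booleans. It shows how the answers map to the next conversation. It does not decide anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AppProfile:
    """Hypothetical yes/no answers to the three triage questions."""
    interprets_health_data: bool      # Q1: tells users what their data means
    output_without_clinician: bool    # Q2: output reaches users without clinician review
    handles_health_info: bool         # Q3: stores, processes, or transmits health information
    provider_connected: bool = False  # assumption: data flows through a provider workflow


def triage(profile: AppProfile) -> list[str]:
    """Return the conversations to have next, in the order the questions are asked."""
    flags: list[str] = []
    if profile.interprets_health_data:
        flags.append("FDA: interpretation (possible CDS / SaMD question)")
    if profile.output_without_clinician:
        flags.append("FDA: output without clinician review")
    if profile.handles_health_info:
        if profile.provider_connected:
            flags.append("HIPAA: health information in a provider-connected workflow")
        else:
            flags.append("FTC: Health Breach Notification Rule may apply")
    return flags


# Example: a consumer symptom checker with no provider integration.
symptom_checker = AppProfile(
    interprets_health_data=True,
    output_without_clinician=True,
    handles_health_info=True,
)
for flag in triage(symptom_checker):
    print(flag)
```

An empty result does not mean the app is clear of regulation. It means none of these three questions was answered "yes," which is a prompt to double-check the answers rather than a conclusion.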

The V1/V2 Path — Getting to Market Without Building a Liability Trap

The smartest founders do not solve the wellness app vs medical device software problem by pretending the line does not exist. They solve it by sequencing the product correctly. That means a V1 that is narrow, defensible, and commercially useful, followed by a V2 that tackles higher-risk clinical functionality with the right budget, evidence, and regulatory path behind it.

V1: Stay Useful Without Becoming Reckless

For an MVP health app, the goal is not to neuter the product. It is to keep the core value while removing the features most likely to trigger avoidable scrutiny. In practice, that usually means the app can:

- display information

- educate users

- prompt next steps

- route decisions to a clinician or other qualified professional

What it should not do in V1 is interpret, score, or autonomously recommend action in a way that turns “helpful” into “regulated.” That is the heart of good MVP regulatory scoping: preserve momentum without building a liability trap into the first release.
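One way to make that scoping stick is to tag every feature with the kind of output it produces and let the release plan follow from the tag. The Python sketch below assumes a hypothetical `OutputKind` label and invented feature names. Deciding which label a feature deserves is still a human regulatory judgment. The code only keeps the roadmap consistent with that judgment.

```python
from enum import Enum


class OutputKind(Enum):
    """Hypothetical labels mirroring the verbs used in this section."""
    DISPLAY = "display"
    EDUCATE = "educate"
    PROMPT = "prompt"
    ROUTE = "route"
    INTERPRET = "interpret"
    SCORE = "score"
    RECOMMEND = "recommend"


# Output kinds this section treats as reasonable for a first release.
V1_ALLOWED = {OutputKind.DISPLAY, OutputKind.EDUCATE, OutputKind.PROMPT, OutputKind.ROUTE}


def split_roadmap(features: dict[str, OutputKind]) -> tuple[list[str], list[str]]:
    """Split features into (V1, deferred to V2) based on their output kind."""
    v1 = [name for name, kind in features.items() if kind in V1_ALLOWED]
    v2 = [name for name, kind in features.items() if kind not in V1_ALLOWED]
    return v1, v2


# Invented feature list for illustration.
features = {
    "medication list": OutputKind.DISPLAY,
    "interaction severity grade": OutputKind.SCORE,
    "share log with clinician": OutputKind.ROUTE,
    "dose adjustment suggestion": OutputKind.RECOMMEND,
}

v1, v2 = split_roadmap(features)
print("V1:", v1)
print("V2:", v2)
```

Here the medication list and the clinician hand-off land in V1, while the severity grade and the dose suggestion wait for V2 and its regulatory plan.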

V2: Add Clinical Depth When the Business Can Support It

Once you have traction, revenue, or a real pilot path, the conversation changes. Now the move from prototype to production can include a serious regulatory workstream, because there is an actual business case to support it.

That is the practical V1 vs V2 regulatory strategy. V1 proves demand and keeps enterprise conversations alive. V2 adds the more ambitious features once you are ready to handle the consequences that come with them.

That is also the logic behind Vibe to Traction: do not confuse “we can build it” with “we should launch it exactly that way.”

Why Topflight for Regulatory Scoping and Health App Development

Topflight is not the team you call to admire your prototype politely and then build the same risk into production with nicer UI. We help founders figure out what they actually have before the dev handoff, investor diligence, or enterprise meeting turns into a more expensive conversation.

That usually means four things:

- intended use review before features harden into scope

- feature-by-feature risk scoping for FDA and CDS exposure

- HIPAA stack readiness, including BAA chain management and data flow mapping

- enterprise readiness for legal, compliance, and procurement review

For founders looking for experienced healthcare app developers, that matters because good HIPAA-compliant app development starts before any code is rewritten. It starts when someone tells you which features belong in V1, which belong later, and which may turn the product into a SaMD health app before you are ready for one.

Frequently Asked Questions

Does my health app need FDA approval?

Not necessarily. The question is whether the app interprets health data, makes clinical claims, or recommends action in a way that moves it beyond simple wellness or information display.

What is the difference between a wellness app and a medical device?

A wellness app generally encourages healthy behavior or displays information. A medical-device-style app starts interpreting data, scoring risk, or influencing diagnosis, treatment, or clinical decisions.

Does “this is not medical advice” protect my app from FDA regulation?

No. A disclaimer does not override what the feature actually does or how it is presented. Reviewers look at product behavior and intended use, not just fine print.

My app was built with Claude. Does that create any specific compliance issues?

The issue is usually not Claude itself. The problem is that AI coding tools can help generate risky features without flagging regulatory or data-handling consequences early enough.

What health app features are most likely to trigger FDA scrutiny?

Common examples include symptom checkers with diagnostic-style output, medication conflict grading, patient-specific recommendations, and automated responses triggered by biometric or wearable data.