Monday morning. Sprint planning. Somebody shares the screen and walks the team through a new AI feature for the health app — a smarter symptom summary, a next-best-action prompt, a patient-facing insight layer. Heads nod. It looks useful. It looks shippable. Someone asks the compliance question everyone knows to ask: “We’re good on HIPAA, right?” Legal says the BAA is signed. Engineering says the data stays inside the approved stack. Product moves to timeline and scope. By Friday, the feature feels real.

And that’s usually when the wrong kind of confidence sets in.

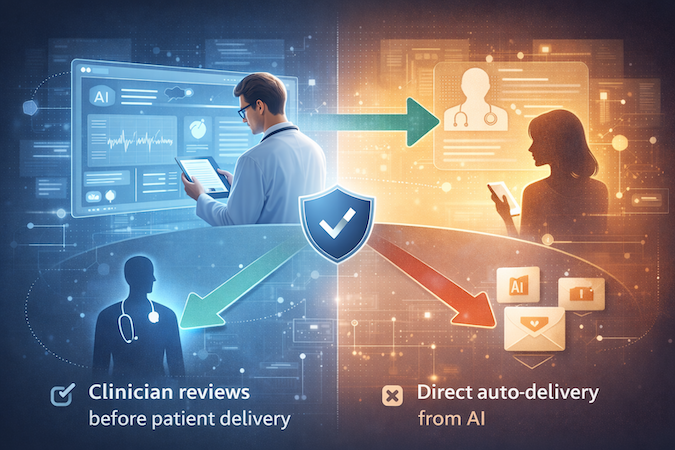

Because the question that matters most often never gets asked: when this AI produces something meaningful, who sits between that output and the patient? A clinician? An ops team member? Nobody? That detail sounds small when everyone is staring at velocity charts and release dates. It is not. It is the line between an assistive feature and a compliance problem your team may only recognize later, when procurement, legal, or a regulator takes a closer look.

Quick Question: If our AI vendor signed a BAA, are we covered from a compliance standpoint?

Quick Answer: Not necessarily. A BAA addresses how health data is handled. It does not determine whether your AI feature may be regulated based on what it does and whether a qualified clinician reviews the output before it reaches the patient. In practice, the make-or-break question is simple: who sits between the AI and the patient?

Key Takeaways

- A signed BAA is not the finish line. HIPAA and FDA device regulation are different frameworks asking different questions. You can be fully covered on data privacy and still create regulatory risk through product design.

- FDA cares about intended use and human review. What you claim the feature does, and whether a qualified clinician must review the output before patient delivery, matters far more than how advanced the model sounds in a demo.

- Provider review should be the default gate. Auto-routing patient-specific insights based on confidence scores may feel efficient, but it weakens the exact control point that makes the feature easier to defend.

Table of Contents

- The BAA Is Not the Finish Line

- What FDA Actually Cares About With AI

- The Provider-in-the-Loop Design Pattern

- The Confidence Scoring Trap

- What This Means for Your Roadmap

- The Meeting Looks the Same Until One Question Changes It

The BAA Is Not the Finish Line

Most teams treat a signed BAA like the compliance finish line. It is not. A BAA (business associate agreement) covers how protected health information is handled between you and a vendor. It does not answer whether your AI feature may be regulated as a medical device.

Two Different Compliance Questions

- HIPAA asks: Is protected health information being handled properly?

- FDA asks: What does the software actually do, and could that function affect patient care or create clinical risk?

These are separate frameworks with separate enforcers. HIPAA enforcement sits with the HHS Office for Civil Rights (OCR). Medical device regulation sits with the FDA.

Where Teams Get Tripped Up

A team can be:

- fully covered on data privacy

- using a vendor willing to sign a BAA

- following internal security rules

…and still be shipping an AI feature that raises FDA questions.

That is because a BAA solves a vendor privacy issue. It does not solve a product function issue.

The Distinction That Matters

You can be 100% HIPAA compliant and still ship an uncleared medical device.

Most digital health teams know how to talk about HIPAA because that is the framework that shows up early. FDA software questions often enter the conversation later, when the feature is already built, sold, or in procurement review. That is too late.

What FDA Actually Cares About With AI

FDA does not start with the model type. It starts with two questions: what is the software intended to do, and who reviews the output before it reaches the patient?

What Is the Software Intended to Do?

- General-purpose AI with no clinical claim: usually not the issue

- AI built to generate patient-specific insights about a named condition from health data: potentially a device

The key is not what the model could do in theory. It is what you built, labeled, and marketed it to do. The moment your product language says something like “personalized AI insights about your Parkinson’s symptoms,” the intended use is no longer vague. It is declared.

Who Reviews the Output Before It Reaches the Patient?

FDA’s criteria for non-device clinical decision support (CDS) software depend in part on whether a qualified clinician can independently review the basis for a recommendation and apply their own judgment before anything reaches the patient.

That means clinician review cannot be optional. The reviewer must be able to understand the basis for the insight and approve, modify, or reject it.

The Practical Line

- AI supports a clinician, who reviews before delivery → safer regulatory position

- AI delivers patient-specific insights directly to the patient automatically → riskier position

That is the test. FDA cares far less about how clever the model is than about what you told the market it does and whether a qualified clinician stands between its output and the patient.

The Provider-in-the-Loop Design Pattern

“Provider in the loop” is not a regulatory abstraction. It is a workflow choice: does every AI-generated insight stop with a clinician before it reaches the patient, or not?

The Wrong Implementation

This is the pattern many teams build because it feels efficient:

- AI generates patient-specific insights

- high-confidence insights go directly to patients

- lower-confidence insights go to a provider queue

- unreviewed items expire or auto-release

The problem is not the AI. The problem is that provider review stops being the default control point. Once patient-facing delivery depends on model confidence instead of mandatory clinical review, the case that the software is merely supporting a clinician gets much weaker.

The Right Implementation

The safer pattern is simpler:

- all AI-generated insights go to a provider queue by default

- a provider reviews each insight before delivery

- the provider can approve, modify, or suppress it

- the patient never receives an AI-generated insight a clinician has not touched

That is what provider in the loop means in practice: clinician review is the gate every time, not a fallback for edge cases.
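The pattern above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the names `Insight`, `ReviewState`, `ProviderQueue`, `review`, and `deliverable_text` are all hypothetical, and a real system would add persistence, audit logging, and access control. The point it shows is that the only path to patient-facing text runs through a recorded clinician decision.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ReviewState(Enum):
    PENDING = "pending"        # waiting in the provider queue
    APPROVED = "approved"      # clinician accepted the draft as written
    MODIFIED = "modified"      # clinician edited the draft
    SUPPRESSED = "suppressed"  # clinician decided it should not be sent


@dataclass
class Insight:
    patient_id: str
    text: str                           # AI-generated draft
    state: ReviewState = ReviewState.PENDING
    reviewer_id: Optional[str] = None   # set only by review()
    final_text: Optional[str] = None    # what the patient may see


class ProviderQueue:
    """Hypothetical queue: every AI-generated insight is submitted here."""

    def __init__(self) -> None:
        self._items: List[Insight] = []

    def submit(self, insight: Insight) -> None:
        self._items.append(insight)

    def pending(self) -> List[Insight]:
        return [i for i in self._items if i.state is ReviewState.PENDING]


def review(insight: Insight, reviewer_id: str, decision: ReviewState,
           edited_text: Optional[str] = None) -> Insight:
    """Record a clinician's decision: approve, modify, or suppress."""
    if decision is ReviewState.PENDING:
        raise ValueError("a review must approve, modify, or suppress")
    if decision is ReviewState.MODIFIED and not edited_text:
        raise ValueError("a modified insight needs the edited text")
    insight.state = decision
    insight.reviewer_id = reviewer_id
    if decision is ReviewState.APPROVED:
        insight.final_text = insight.text
    elif decision is ReviewState.MODIFIED:
        insight.final_text = edited_text
    else:  # SUPPRESSED: nothing is ever delivered
        insight.final_text = None
    return insight


def deliverable_text(insight: Insight) -> Optional[str]:
    """Return patient-facing text only after a clinician approved or edited it."""
    reviewed = insight.state in (ReviewState.APPROVED, ReviewState.MODIFIED)
    if reviewed and insight.reviewer_id:
        return insight.final_text
    return None
```

Note that there is no expiry or auto-release branch: an insight nobody reviews simply stays pending, and `deliverable_text` keeps returning `None` for it.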

Where Teams Get Themselves in Trouble

The common workaround is to allow direct delivery for high-confidence outputs by default, or to enable it system-wide and let providers opt out later. That sounds subtle in a sprint ticket, but it materially changes the regulatory posture of the feature.

If high-confidence auto-routing exists at all, the safer position is for it to be:

- enabled explicitly by the provider

- limited to that provider’s patients

- never the system-wide default
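One way to express those three constraints in code is a per-provider policy object. The sketch below is an assumption about how such a control might look; `AutoRoutePolicy`, its fields, and the `SYSTEM_DEFAULT_AUTO_ROUTE` constant are hypothetical names, not part of any real product or library.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

# Hypothetical constant: the system-wide default stays off.
SYSTEM_DEFAULT_AUTO_ROUTE = False


@dataclass
class AutoRoutePolicy:
    # provider_id -> patient_ids on that provider's panel
    panels: Dict[str, Set[str]] = field(default_factory=dict)
    # providers who have explicitly turned auto-routing on
    opted_in: Set[str] = field(default_factory=set)

    def opt_in(self, provider_id: str) -> None:
        """Called only from an explicit provider action, never by a migration."""
        self.opted_in.add(provider_id)

    def opt_out(self, provider_id: str) -> None:
        self.opted_in.discard(provider_id)

    def allows(self, provider_id: str, patient_id: str) -> bool:
        """True only for an opted-in provider and one of their own patients."""
        if provider_id not in self.opted_in:
            return SYSTEM_DEFAULT_AUTO_ROUTE
        return patient_id in self.panels.get(provider_id, set())
```

The design choice worth noticing is the direction of the default: a provider who does nothing gets mandatory review, and opting in never reaches beyond that provider's own panel.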

The UI Matters Too

Providers need to see the basis for the insight, not just the AI’s conclusion. If they only see a polished recommendation without the underlying patient data or context, they are not exercising independent judgment.

Patients should also receive clearly attributed messages. Not “our AI thinks your symptoms are worsening,” but something closer to “Dr. X reviewed your recent data and shared this update.”
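Both UI points can be treated as checks in code. The following is a small sketch under stated assumptions: `ReviewCard`, `can_present_for_review`, and `patient_message` are hypothetical names, and the message wording simply follows the example in the paragraph above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReviewCard:
    conclusion: str    # the AI's suggested insight
    basis: List[str]   # underlying patient data and context shown to the provider


def can_present_for_review(card: ReviewCard) -> bool:
    """Refuse to show a bare conclusion with no supporting basis."""
    return bool(card.conclusion.strip()) and len(card.basis) > 0


def patient_message(provider_name: str, approved_text: str) -> str:
    """Attribute the update to the reviewing clinician, not to the AI."""
    return (f"{provider_name} reviewed your recent data "
            f"and shared this update: {approved_text}")
```

For example, `patient_message("Dr. X", "Your readings were stable this week.")` produces a message that opens with "Dr. X reviewed your recent data and shared this update:".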

That is the dividing line. Provider review as the default gate signals assistive software. Provider review as a fallback starts pushing the feature toward autonomous clinical output.


The Confidence Scoring Trap

Auto-routing based on confidence scores feels like responsible AI engineering: if the model is highly confident, send the insight straight to the patient; if confidence is lower, route it to a provider. Efficient on paper. Risky in practice.

Why Confidence Is Not Clinical Judgment

A confidence score is an internal model metric. It tells you how sure the system is about its output, not whether that output is clinically appropriate for a specific patient at a specific moment.

- Model confidence = how sure the system is

- Clinical appropriateness = whether this should actually be delivered to the patient

Those are different questions.

What FDA Cares About Instead

FDA’s framework does not relax because the model says it is 94% confident. The real question is whether a qualified clinician reviews the output before it reaches the patient and applies judgment the software cannot.

That is why confidence-based auto-routing is a trap. Teams treat it like a safety mechanism. In regulatory terms, it often works more like a bypass.

The Context Problem

A high-confidence insight can still be the wrong thing to deliver directly. A provider may know the patient is under acute stress, going through a medication change, or likely to misinterpret the message. The model does not hold that context the way a clinician does.

That is the point of the human loop: not to second-guess the AI’s math, but to apply clinical context the AI structurally cannot have.

The Safer Rule

If the output is important enough to reach the patient, it is important enough to be reviewed by the provider first.
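The difference between the trap and the safer rule is easy to see side by side. This is an illustrative sketch only: the `ScoredInsight` type, both function names, and the 0.90 threshold are hypothetical, chosen to mirror the kind of cutoff a team might pick.

```python
from dataclasses import dataclass

# Hypothetical cutoff of the kind a team might choose for auto-delivery.
AUTO_DELIVERY_THRESHOLD = 0.90


@dataclass
class ScoredInsight:
    text: str
    confidence: float  # internal model metric, between 0.0 and 1.0


def route_by_confidence(insight: ScoredInsight) -> str:
    """The trap: model confidence decides whether a clinician ever sees it."""
    if insight.confidence >= AUTO_DELIVERY_THRESHOLD:
        return "patient"
    return "provider_queue"


def route_through_provider(insight: ScoredInsight) -> str:
    """The safer rule: the destination never depends on the score."""
    return "provider_queue"
```

With a 94% confident insight, the first function returns `"patient"` and the second returns `"provider_queue"`. The score can still travel with the insight as information for the reviewer; it just never chooses the route.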

What This Means for Your Roadmap

The practical question is simple: what changes in the roadmap right now?

If You Haven’t Shipped Yet

Build provider review in as the default from day one. It is far cheaper to design the queue, review states, attribution, and provider controls in sprint one than to retrofit them after the feature is live.

If You’ve Already Shipped With Auto-Delivery

Revisit the workflow before you scale. That does not automatically mean crisis, but it does mean the current design is worth reviewing with a regulatory advisor before enterprise diligence, procurement, or partner review turns it into a harder conversation. If the feature is live now:

- disable auto-delivery by default

- route all outputs to provider review

- add approve / edit / suppress states

- show basis/context for each insight

- change patient-facing attribution

- review product claims / marketing language

- get regulatory review before scale-up
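A useful first step before changing anything is to measure how much has already gone out unreviewed. The sketch below assumes you can export delivery records in roughly this shape; `DeliveryRecord` and `unreviewed_deliveries` are hypothetical names, and your actual schema will differ.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DeliveryRecord:
    insight_id: str
    delivered: bool              # did this insight reach the patient?
    reviewer_id: Optional[str]   # clinician who reviewed it, if any


def unreviewed_deliveries(records: List[DeliveryRecord]) -> List[str]:
    """Return IDs of insights that reached a patient with no recorded reviewer."""
    return [r.insight_id for r in records if r.delivered and not r.reviewer_id]
```

An empty result after the workflow changes is the kind of evidence that is easy to hand to a regulatory advisor or a procurement reviewer.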

If You’re Building for a Client

Do not apologize for the provider-in-the-loop pattern. In serious healthcare environments, it is a strength because it shows the team understood the regulatory landscape before building, not after.

The Roadmap Rule

If the feature matters enough to build, it matters enough to structure correctly:

- provider review as the default

- no system-wide auto-delivery of patient-specific AI insights

- product language that reflects clinician-reviewed delivery

- architecture you can defend in diligence, not just in a sprint demo

The Meeting Looks the Same Until One Question Changes It

Monday morning again. Same sprint planning meeting. Same AI feature on the screen. Someone asks the familiar question: “We’re good on HIPAA, right?” Legal confirms the BAA. Engineering says the data stays inside the approved stack.

But this time, the meeting does not move on.

Someone asks the question that actually matters: who reviews this before the patient sees it?

Now the conversation changes. It is no longer just about velocity and scope. It is about workflow, provider approval, attribution, and what happens by default, not just in edge cases.

That is the fork in the road.

The difference between a product that survives enterprise review and one that stalls in procurement is often a single workflow decision made early.

The real question was never whether your AI is smart enough. It is whether your product keeps a qualified human between the model and the patient.

That is the question most teams still have not asked.

It is also the one that decides whether you built a feature or a liability.

Frequently Asked Questions

Does a BAA with an AI vendor mean our AI feature is compliant?

No. A BAA addresses privacy and security obligations around protected health information, but it does not determine whether the feature creates a separate regulatory issue based on intended use and workflow design.

What does FDA care most about with AI in a health app?

Two things matter most: what the software is intended to do and who reviews the output before it reaches the patient. Those factors matter far more than the model type or confidence score.

Are confidence scores enough to justify auto-delivery to patients?

No. Confidence is an internal model metric, not proof that a patient-facing insight is clinically appropriate in context.

What is the safer product pattern for AI-generated insights?

Send all AI-generated insights to a provider queue by default, require review before delivery, and let the provider approve, modify, or suppress the message. The patient should not receive an AI-generated insight a clinician has not touched.

What if we already shipped auto-delivery?

Then the workflow is worth revisiting before you scale. It may not be a crisis, but it is the kind of design choice that will draw harder questions in enterprise, partnership, or investor diligence.